Hate Speech

What is Hate Speech?

In common language, “hate speech” refers to offensive discourse that targets a group or an individual based on inherent characteristics (such as race, religion or gender) and that may threaten social peace. Black's Law Dictionary, 9th Edn., defines hate speech as “speech that carries no meaning other than the expression of hatred for some group, such as a particular race, especially in circumstances in which the communication is likely to provoke violence.”[1]

The 1997 Recommendation of the Council of Europe Committee of Ministers on Hate Speech (CM Recommendation) provided its first definition of hate speech, stating it as follows: “all forms of expression which spread, incite, promote or justify racial hatred, xenophobia, anti-Semitism or other forms of hatred based on intolerance, including intolerant expression by aggressive nationalism and ethnocentrism, discrimination and hostility against minorities, migrants and people of immigrant origin.”

Official Definition of Hate Speech

Hate Speech as defined in Indian legislation

Hate speech has not been defined in any law in India. However, provisions in certain statutes prohibit select forms of speech as an exception to freedom of speech. The Courts have time and again advised persons affected by hate speech to take recourse to the provisions laid down in the penal and other laws. Some laws which may provide remedies against hate speech are listed below.

Legal provisions relating to hate speech

Article 19 of the Constitution of India (‘Constitution’) guarantees freedom of speech and expression as a fundamental right. Article 19(2) of the Constitution allows for reasonable restrictions on the freedom of speech and expression in the interests of sovereignty and integrity of India, security of the state, friendly relations with foreign states, public order, decency or morality, contempt of court, defamation, or incitement to an offense.

Reasonable restrictions on Article 19 have enabled the existence of provisions and laws restricting hate speech, such as Section 196 of the Bharatiya Nyaya Sanhita, 2023 (BNS) (erstwhile Section 153A of the Indian Penal Code (IPC)) and other legislation such as the Protection of Civil Rights Act, 1955, the Representation of the People Act, 1951, and the Scheduled Castes and the Scheduled Tribes (Prevention of Atrocities) Act, 1989.

Bharatiya Nyaya Sanhita, 2023 (BNS)

Acts done to promote enmity between different groups, on the grounds of, inter alia, religion, race, place of birth, residence, language, caste

Hate speech is addressed under Section 196 of the Bharatiya Nyaya Sanhita, 2023 (BNS) under the head 'promoting enmity between different groups on grounds of religion, race, place of birth, residence, language, etc., and doing acts prejudicial to maintenance of harmony.' Section 196 BNS criminalises acts done to promote enmity between different groups on the grounds of, inter alia, religion, race, place of birth, residence, language, or caste. Punishment under sub-section (1) is imprisonment of up to 3 years, a fine, or both. Sub-section (1) contains three clauses that set out the ways in which this offence can be committed.

- Clause (a) of Section 196(1) BNS specifies ways in which enmity can be promoted which are as follows: by spoken or written words, or by signs or visible representation, or through electronic communication. For the purposes of this clause, the grounds on which enmity can be promoted are religion, race, language, residence, or caste, among others.

- Clause (b) of Section 196(1) BNS criminalises acts that are detrimental to societal harmony, and which lead to or are likely to lead to public disturbance. It focuses on actions that are likely to breach peace or cause disruptions within society.

- Clause (c) of Section 196(1) BNS prohibits conduct such as organising or participating in exercises, drills, or movements intended for or likely to involve participants being trained in violence or criminal force against specific groups. It also takes into account if such actions can cause fear, alarm, or insecurity among members of the targeted groups. These targeted groups are identified on the grounds which are specified in the above clauses.

Section 196(2) addresses aggravated scenarios by prohibiting acts in sub-section (1) that occur in places of worship or during religious ceremonies. The punishment under this sub-section is imprisonment of up to 5 years, and a fine.

The erstwhile Section 153A of the Indian Penal Code[2] did not include electronic communication as a means of promoting enmity. Section 196(1) of the Bharatiya Nyaya Sanhita (BNS) includes it, making the section more comprehensive given the rise in hate speech practised online or through other electronic media.

Acts that could lead to communal discord

Section 298 BNS aims to prevent acts that could incite religious tension or violence by protecting places of worship and religious symbols from desecration. It criminalises acts that could lead to communal discord. The punishment for this offence is imprisonment for up to two years, or a fine, or both, at the discretion of the court. The section applies to the following acts when done with the intention of insulting the religion of any class of people, or with the knowledge that they are likely to be regarded as such an insult:

- Destruction, damage, or defilement: This refers to any act of causing harm to religious property, including the physical destruction or defilement (such as desecration) of a place of worship or religious symbols and objects.

- Intention to insult: The offence occurs when the act is carried out with the specific purpose or intention to insult the religion of a particular group of people. For example, destroying a religious idol or holy book to provoke anger or disrespect against that religion.

- Knowledge that it is likely to insult: If the person performing the act knows that their actions are likely to be perceived as an insult to the religion of others, even if that was not their primary intention, they can still be held liable.

Section 298 BNS corresponds to erstwhile Section 295 IPC and carries the same maximum punishment of two years' imprisonment. It should be distinguished from two related offences. Erstwhile Section 295A IPC (now Section 299 BNS) focuses on deliberate and malicious acts that insult the religious beliefs of a class of people, with a higher punishment (up to three years' imprisonment). Erstwhile Section 298 IPC (now Section 302 BNS) deals with uttering words with the deliberate intent to wound the religious feelings of a person, with a lighter punishment (up to one year's imprisonment).

Public Mischief

Section 353 BNS (erstwhile Section 505 of IPC) punishes those who spread false statements, rumours, or reports to cause public mischief. The punishment ranges from up to 3 years' imprisonment (in general cases) to up to 5 years' imprisonment (if the offence is committed in a place of worship).

Information Technology Act, 2000

The declaration of Section 66A of the IT Act as unconstitutional had the potential to give online hate speech a free hand to proliferate. However, the IT Rules, 2021, read with various provisions of the IPC, have provided a means of controlling online hate speech.

Section 66A of Information Technology Act, 2000

The Information Technology (Amendment) Act, 2008 introduced Section 66A into the IT Act (declared void ab initio in Shreya Singhal v. Union of India (2015)). Section 66A prohibited the publication of material which ‘is grossly offensive or has menacing character’, or which is broadcast, despite being known to be false, for the purpose of ‘causing annoyance, inconvenience, danger, obstruction, insult, injury, criminal intimidation, enmity, hatred or ill will’. This provision was intended to protect women from cyber crimes such as vulgar mobile phone messages. In effect, it criminalised ‘offensive speech’ online. Section 66A granted wide powers to the government to make arrests to protect against annoyance, inconvenience, danger, obstruction, insult, injury, criminal intimidation, or ill will arising from information shared online.

In Shreya Singhal v. Union of India, the Supreme Court declared Section 66A ‘unconstitutionally vague’, for it failed to provide limits on the government’s power. The Court held that the provision did not fall within the reasonable restrictions permitted on the freedom of speech and expression. It declared the provision to be ‘void ab initio’, meaning it should be treated as though it never existed. However, Section 66A continues to be used to file FIRs and make arrests across the country. No amendment has been enacted to remove it from the IT Act. In 2021, the Ministry of Home Affairs issued an advisory to States and Union Territories to direct all police stations not to register cases under the ‘repealed’ Section 66A.

Intermediary Liability

Section 69A of the Information Technology Act empowers the central government to block any content or website by means of binding directions issued to social media intermediaries. Intermediaries that merely provide a platform for sharing information are protected under Section 79 of the Information Technology Act from liability arising out of hate speech posted on them. Furthermore, in 2021 the government notified the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, which make several provisions for regulating content posted online, including hate speech. The Rules control such material by placing the onus on the social media platform hosting the content.

Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021

Rule 3 of the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 provides for due diligence to be undertaken by intermediaries. It stipulates that the prominently displayed rules and regulations of the intermediary must suitably inform users that they shall not transmit or publish any information which, among other things, violates any law, threatens the unity and integrity of the nation, or causes injury to any person. Under Section 79(3) of the Information Technology Act, the intermediary is required to take action against such information if and when notified by the government. The information must be removed as early as possible, and in any case no later than 36 hours from receipt of the notification.

Further, to aid the tracking of users who post content on a social media platform, the intermediary is required to keep a record of all information known about its users for a period of 180 days after cancellation of the user's registration. If required by the government or police, the information must be furnished within 72 hours for the purpose of investigation. When the intermediary is a “significant social media intermediary”, further measures must be followed, including appointing compliance officers and giving notice to social media users whenever action is taken. Under Rule 16, the government is also authorised to block information if such action needs to be taken expeditiously.

Representation of the People Act, 1951

Section 8 of the Representation of the People Act, 1951 disqualifies individuals convicted of offences involving hate speech from contesting elections. Section 123(3A) treats the promotion of enmity on grounds of religion, race, caste, community, or language during election campaigns as a corrupt practice. Section 125 prohibits hate speech aimed at promoting enmity to affect elections.

Religious Institutions (Prevention of Misuse) Act, 1988

Section 3(g) of the Religious Institutions (Prevention of Misuse) Act, 1988 prohibits the use of religious premises for promoting enmity or hatred between different groups on religious, racial, linguistic, or regional grounds. Section 7 provides that the manager and every person connected with such contravention shall be punishable with imprisonment for a term which may extend to five years and with a fine which may extend to ten thousand rupees.

Scheduled Castes and Scheduled Tribes (Prevention of Atrocities) Act, 1989

Section 3(1)(r) of The Scheduled Castes and Scheduled Tribes (Prevention of Atrocities) Act, 1989 [SC ST Act] states that one who intentionally insults or intimidates with intent to humiliate a member of a Scheduled Caste or a Scheduled Tribe in any place within public view shall be punished with imprisonment of not less than 6 months that can extend up to 5 years.

Protection of Civil Rights Act, 1955

Section 7 of Protection of Civil Rights Act, 1955 penalizes incitement to, and encouragement of untouchability through words, either spoken or written, or by signs or by visible representations or otherwise.

Hate Speech as defined in International Instruments

International Covenant on Civil and Political Rights (ICCPR)

The International Covenant on Civil and Political Rights (ICCPR) establishes an important normative foundation for regulating hate speech. Article 20(2) requires States Parties to prohibit by law any advocacy of national, racial, or religious hatred that constitutes incitement to discrimination, hostility, or violence. At the same time, Article 19 protects freedom of expression, subject to restrictions that are necessary for respect of the rights or reputations of others, national security, public order, or public health or morals.

To assist in interpreting these provisions, the United Nations developed the Rabat Plan of Action, which proposes a six-part threshold test to determine when speech reaches the level of incitement requiring legal prohibition. The test considers context, speaker, intent, content and form, extent of dissemination, and likelihood and imminence of harm. This framework seeks to distinguish offensive expression from speech that poses a genuine risk of discrimination or violence.

International Convention on the Elimination of All Forms of Racial Discrimination (ICERD)

The International Convention on the Elimination of All Forms of Racial Discrimination (ICERD) adopts a stronger stance against racist expression. Article 4 requires States Parties to criminalise the dissemination of ideas based on racial superiority or hatred, even in the absence of direct incitement to violence. This reflects an emphasis on protecting dignity and combating structural discrimination. Comparative scholarship has observed that some domestic legal frameworks may not fully align with ICERD’s broader prohibition of racially discriminatory speech. Specifically, Article 4(a) provides that States Parties shall ‘declare an offence punishable by law all dissemination of ideas based on racial superiority or hatred, incitement to racial discrimination, as well as all acts of violence or incitement to such acts against any race or group of persons of another colour or ethnic origin’.

Hate Speech as defined in Official Documents

State Legislative Bills

Karnataka Hate Speech and Hate Crimes (Prevention) Bill, 2025

The Karnataka Hate Speech and Hate Crimes (Prevention) Bill, 2025[3] represents a state-level attempt to address what commentators describe as an “enforcement gap” in India’s existing criminal framework, particularly under the Bharatiya Nyaya Sanhita (BNS), which largely penalises hate speech on an individual, case-by-case basis. The Bill seeks to introduce a more structured and comprehensive regime by defining hate speech and hate crimes separately, prescribing stringent penalties, making offences cognizable and non-bailable, and providing mechanisms for victim compensation and removal of harmful content.

One of the key features of the Bill is the expansion of the definition of hate speech to explicitly include online content and digital communications. This encompasses a wide range of digital expressions, including social media posts, forwarded messages, memes, videos, and even private messages that are widely disseminated. The definition goes beyond the traditional legal understanding of hate speech centred on incitement to violence to include content that promotes discrimination, hostility, or marginalisation based on religion, caste, race, gender, sexual orientation, or political beliefs. While this broad scope attempts to capture the subtleties of modern online hate, it also raises concerns about vagueness and potential misuse.

The Bill further categorises hate speech as a non-bailable and cognizable offence, thereby placing it in the category of serious criminal acts. This means that the accused cannot obtain bail as a matter of right and that the police can arrest without a warrant. The purpose of this classification is to reflect the severity of hate speech in causing communal unrest, psychological harm, and social disruption. However, critics argue that this criminalisation framework, especially without a clear statutory threshold for what constitutes hate speech, may stifle dissent and be used as a tool for political vendetta.

A particularly notable and controversial provision of the Bill is its extension of legal liability to digital intermediaries, such as social media platforms and internet service providers. These platforms are required to take down flagged hate content within a specified period (typically 24–36 hours) from the time they are notified by the state authorities. Failure to comply may result in significant fines, civil liability, and even suspension of operations within the state. This move builds upon the framework under the IT Rules, 2021, but imposes stricter obligations, especially in terms of compliance timeframes and penalties. Moreover, the Bill introduces state-level authority for regulating digital content, which raises significant federalism concerns given that the regulation of telecommunication and internet services falls under the Union List of the Constitution.

Perhaps the most contentious element of the Bill is the inclusion of private communications within its scope. The Bill provides that if a private digital message (for example, sent via WhatsApp, Signal, or Telegram) is made public, whether through forwarding, screenshotting, or broadcasting, and contains hate content, the originator of the message may be held criminally liable. While the objective is to track and penalise the origin of viral hate campaigns that often begin in closed groups, this provision has raised serious privacy concerns, particularly in the wake of the Supreme Court’s landmark recognition of the right to privacy as a fundamental right in Justice K. S. Puttaswamy v. Union of India (2017).[22] This provision may potentially lead to increased surveillance or backdoor traceability demands on encrypted platforms, raising alarms about state intrusion into private life.[4]

Private Member Bills introduced in Rajya Sabha

The Hate Speech and Hate Crimes (Prevention) Bill, 2022

The Hate Speech and Hate Crimes (Prevention) Bill, 2022[5] was introduced in the Indian Parliament by K.R. Suresh Reddy to address hate speech and hate crimes. It defines "hate speech" as expressions that incite, justify, or promote discrimination, hatred, hostility, or violence, or that denigrate persons or groups based on protected characteristics including religion, race, caste, sex, gender identity, sexual orientation, place of birth, national/ethnic origin, language, age, or disability. It establishes "hate crimes" as offences motivated by prejudice against these traits, with graded punishments: up to 7 years imprisonment plus ₹1 lakh fine for hurt; up to 10 years plus ₹3 lakh for grievous hurt; and life imprisonment plus ₹5 lakh for death. It separately criminalises mob violence and lynching with proportionate penalties.

The Hate Crimes and Hate Speech (Combat, Prevention and Punishment) Bill, 2022

The Hate Crimes and Hate Speech (Combat, Prevention and Punishment) Bill, 2022 [6] was introduced by Prof. Manoj Kumar Jha in the Rajya Sabha on 9th December 2022. It defines "hate speech" as any act that incites, justifies, promotes, or spreads discrimination, hatred, hostility, or violence against persons or groups based on protected characteristics such as religion, race, caste, sex, gender identity, sexual orientation, place of birth, national/ethnic origin, language, age, or disability. Hate crimes are ordinary offences motivated by such prejudice, with enhanced punishments: up to 7 years imprisonment and ₹1 lakh fine if causing hurt; up to 10 years and ₹3 lakh for grievous hurt; life imprisonment and up to ₹5 lakh if resulting in death. The provisions of the Bill are framed in a victim-centred manner that considers the impact of the offence on the victim and the prospects of securing convictions where serious crimes are committed. The Bill empowers the District Magistrate to issue orders on apprehension of a breach of peace or the creation of discord between members of different groups, castes or communities, and provides a mechanism for promoting awareness to prohibit, prevent and combat hate crimes and hate speech.

The Online Hate Speech (Prevention) Bill, 2024

The Online Hate Speech (Prevention) Bill, 2024 seeks to address communal and religious hatred propagated through social media platforms. The bill proposes to criminalise the publication, distribution, or performance of speech online that spreads or incites religious enmity or denigrates individuals on grounds such as religion, race, caste, community, sex, national or ethnic origin, language, or disability. Sahney argued that the existing framework under the Information Technology Act, 2000 is outdated in the context of contemporary social media, and emphasised the need for updated legislation to promote digital harmony, constitutional values, and equal dignity.

Documents by International Organizations

Parliamentary Toolkit on Hate Speech (Council of Europe)

The Council of Europe's Parliamentary Toolkit on Hate Speech[7] defines hate speech as generalised expressions directed at protected groups rather than specific individuals. Such speech includes statements or online content promoting conspiracy theories, racial superiority, or other derogatory narratives targeting communities defined by characteristics such as race, religion, or ethnicity. The focus is on harm to the group as a collective. By contrast, hate crime offences involve the commission of an underlying criminal act, such as harassment or intimidation, where the conduct is motivated by, or demonstrates, hostility toward a victim based on protected characteristics. In jurisdictions with hate crime frameworks, the presence of bias may enhance the penalty, although the conduct would remain criminal even without the discriminatory element. The distinction is significant because the freedom of expression implications differ: hate speech regulation directly engages expressive activity, whereas hate crime laws primarily punish conduct, with bias operating as an aggravating factor.

Hate Speech as defined in Case Laws

Promoting Enmity

Bilal Ahmed Kaloo vs State Of Andhra Pradesh

In Bilal Ahmed Kaloo vs State of AP (1997), the Supreme Court held that merely hurting other people’s religious sentiments cannot amount to an offence under Section 153A or Section 505(2) of the IPC. The judgment stated:

“The common feature in both sections being promotion of feeling of enmity, hatred or ill-will “between different” religious or racial or language or regional groups or castes and communities it is necessary that at least two such groups or communities should be involved. Merely inciting the feeling of one community or group without any reference to any other community or group cannot attract either of the two sections.”

Amish Devgan v. Union of India

In Amish Devgan v. Union of India, the Supreme Court reaffirmed that “the effect of the words must be judged from the standards of reasonable, strong-minded, firm and courageous men, and not those of weak and vacillating minds, nor of those who scent danger in every hostile point of view”. As for the intent aspect, the Court noted that the speech must “intend only to promote hatred, violence or resentment against a particular class or group without communicating any legitimate message. This requires subjective intent on the part of the speaker to target the group or person associated with the class/group”. The Court also emphasised that the freedom of speech may not be arbitrarily restrained by hate speech laws. The Court opined that the defences of ‘good faith’ (where speakers display prudence and caution in their expression or content) and ‘legitimate purpose’ (where the speech has some clear purpose other than spreading hatred or inciting violence) are available to those accused of engaging in hate speech.

State of Karnataka v. Praveen Bhai Thogadia

The Court in State of Karnataka v. Praveen Bhai Thogadia upheld the State’s decision to impose a restriction on Praveen Bhai Thogadia, a political leader linked to a right-wing Hindu religious group. He was barred from participating in any gatherings within a particular district for a period of two weeks. The State’s rationale for this action was the “communally sensitive” nature of the district. Thogadia had recently delivered an “inflammatory speech” that incited communal tensions, and there was a strong likelihood that his presence would disrupt communal harmony. The Court affirmed that the restriction was justified, as it was aimed at maintaining public order, which in turn safeguarded secularism, a value that the State is obliged to actively protect.

Pravasi Bhalai Sangathan v. Union of India [8]

The Court in Pravasi Bhalai Sangathan v. Union of India expressed caution about the difficulty of defining hate speech and limiting it to a manageable standard. It provided a working definition of hate speech, informed by both domestic and international law. The definition described hate speech as an attempt to marginalise individuals based on their membership in a group. It involves using expression that exposes the group to hatred, undermining their legitimacy in the eyes of the majority, and diminishing their social standing and acceptance. Hate speech, therefore, causes harm not only to individuals but can have broader societal impacts, obstructing the ability of a protected group to engage fully in public discourse and democracy. While examining existing legal provisions, the Court referred to the offence of sedition under Section 124A of the Indian Penal Code, which criminalises acts that bring or attempt to bring the government into hatred or contempt. The Court noted that while sedition law covers acts against the State, it does not equate to hate speech as defined judicially. The Court’s reference to sedition law unintentionally allowed the police to misuse it in cases involving alleged hate speech.

The Court also observed that hate speech marginalises individuals based on their identity and lays the foundation for attacks on vulnerable people, including violent ones. The Court stated as follows:

“Hate speech is an effort to marginalise individuals based on their membership in a group. Using expression that exposes the group to hatred, hate speech seeks to delegitimise group members in the eyes of the majority, reducing their social standing and acceptance within society. Hate speech, therefore, rises beyond causing distress to individual group members. It can have a societal impact. Hate speech lays the groundwork for later, broad attacks on vulnerable that can range from discrimination, to ostracism, segregation, deportation, violence and, in the most extreme cases, to genocide. Hate speech also impacts a protected group’s ability to respond to the substantive ideas under debate, thereby placing a serious barrier to their full participation in our democracy.”

Finally, the Court urged the Law Commission of India, which was already reviewing the powers of the Election Commission in relation to hate speech by political parties during election campaigns, to propose a clear definition of hate speech and make recommendations to Parliament.

Hate Speech as defined in official government reports

Law Commission report

The 267th Report of the Law Commission of India (2017) characterises hate speech as spoken, written, symbolic, or visual expression that aims to create fear, spread hatred, or encourage social violence. It is likely to harm individuals or groups based on identity factors like religion, caste, or language. Hate speech incites discrimination, hostility, or violence and undermines equality in society. It often marginalises vulnerable groups, creating a discriminatory environment. [9] The Commission lays down a framework of principles to be applied when dealing with hate speech, drawing upon developments in European and international law. The Commission stresses a three-part test: 1) Is the interference prescribed by law? 2) Is the interference proportionate to the legitimate aim pursued? 3) Is the interference necessary in a democratic society? These principles of necessity and proportionality, together with the requirement that limitations on free speech be prescribed by law and pursue a legitimate aim, underlie the ICCPR understanding of the freedom of speech and the decisions of the European Court of Human Rights.

Viswanathan Committee 2015

The Viswanathan Committee 2015 [10] recommended the insertion of Sections 153C(b) and 505A into the IPC to criminalise incitement to offences on grounds of religion, race, caste, community, sex, gender identity, sexual orientation, place of birth, residence, language, disability, or tribe, prescribing a penalty of up to two years’ imprisonment and a fine of ₹5,000.

Bezbaruah Committee 2014 [11]

The Bezbaruah Committee 2014 [11] contemplated amendments to Section 153C IPC, dealing with acts against human dignity, enhancing the penalty to up to five years’ imprisonment and a fine, and to Section 509A IPC, concerning insults directed at a particular race, prescribing up to three years’ imprisonment or a fine.

International experiences

European Union

The European Convention on Human Rights protects freedom of expression under Article 10 but permits restrictions that are necessary in a democratic society to protect the rights of others or prevent disorder and crime. The European Court of Human Rights applies a case-by-case balancing approach, assessing proportionality and context. Speech that incites hatred or violence may fall outside protection, particularly where it undermines democratic values or human dignity.

The regulatory framework established by the EU for online hate speech incorporates a 'notice and action' process, detailed in Articles 16-18 of the Digital Services Act (DSA)[12], to manage the removal of illegal content. Upon receiving such notices, hosting services are required to act without undue delay, considering both the nature of the illegal content in question and the urgency of the action required. The importance of Article 16’s 'notice and action' mechanism extends further when considered in conjunction with Article 34 of the DSA. Article 34 requires very large online platforms to conduct risk assessments to identify and evaluate significant systemic risks arising from the operation and use of their services within the EU. These assessments encompass various systemic risks, including the dissemination of illegal content, potential infringements on fundamental rights such as privacy, freedom of expression, and non-discrimination, and the intentional manipulation of services. The 'notice and action' mechanism is a critical tool for platforms to address one of the key systemic risks identified in these assessments: the dissemination of illegal content. The interplay between Article 16 and Article 34 underscores a comprehensive regulatory approach. Article 16 provides the operational means to tackle illegal content in real time, while Article 34 ensures that platforms maintain an ongoing, proactive stance in managing and mitigating broader systemic risks. The Code of Conduct on Countering Illegal Hate Speech Online+ (Code of Conduct+) has been integrated into the framework of the DSA under Article 45 DSA, and is intended to strengthen the way online platforms deal with content that EU and national laws define as illegal hate speech.

United Kingdom

The United Kingdom regulates hate speech primarily through the Public Order Act 1986 and the Racial and Religious Hatred Act 2006. These laws criminalise the use of threatening words or behaviour intended to stir up hatred on grounds such as race, religion, or sexual orientation. Intent and context are central considerations, and courts distinguish between offensive speech and speech that crosses the threshold into criminal incitement. In the UK, hate speech is defined as expressions of hatred toward someone on account of said person’s (perceived) race, nationality, religion, gender identity, sexual orientation or disability. Hate speech includes any communication that is threatening or abusive, and is intended to harass, alarm, or distress someone.

The Online Safety Act, 2023 (OSA) in the UK is a legislative effort to foster a safer digital environment by holding internet companies accountable for the content circulated through their platforms. The regulatory approach categorises responsibilities based on the legality of content, establishing a framework that scrutinises content for compliance. As part of this framework, the Act mandates that platforms take measures to prevent children from accessing harmful content, such as content promoting self-harm, even if it is not illegal. Under the OSA, internet entities must adhere to a statutory duty of care, monitored by Ofcom, the United Kingdom’s independent communications regulator, which is set to take on regulatory powers to protect UK users from harms occurring on user-to-user services and search services.

Germany

Germany maintains one of the most stringent hate speech regimes in Europe, shaped by its historical experience with Nazism and the Holocaust. Section 130 of the Criminal Code (Volksverhetzung) criminalises incitement to hatred, calls for violence, and attacks on human dignity directed at protected groups. Holocaust denial, Nazi propaganda, and the use of symbols of unconstitutional organisations are also prohibited.

Germany’s constitutional order places strong emphasis on human dignity under Article 1 of the Basic Law. The Network Enforcement Act (NetzDG) requires large social media platforms to remove manifestly unlawful content within specified timeframes or face substantial fines. Violations may result in fines or imprisonment, and even public insults can constitute criminal offences where they infringe personal honour.

United States

The United States adopts a markedly speech-protective model under the First Amendment to the Constitution. Unlike many European jurisdictions, the United States does not criminalise hate speech as such, instead favouring counter-speech and robust public debate as primary responses.[13] The Supreme Court of the United States has consistently held that hateful or offensive speech is generally protected unless it falls within narrow exceptions. The contemporary approach to hate speech in the US can be defined in a few words: freedom of speech is a fundamental constitutional right, which government may only restrict under certain prescribed circumstances. The Constitution grants the government some scope to regulate the 'time, place, and manner' of speech, but not the opinion expressed, no matter how hateful or despicable. An exception is made for 'true threats' or 'incitement to imminent lawless action'.

In Brandenburg v. Ohio (1969), the Court established that speech may only be restricted where it is directed to inciting imminent lawless action and is likely to produce such action. Earlier, in Chaplinsky v. New Hampshire (1942), the Court recognised limited categories of unprotected speech, including “fighting words” and true threats. In Matal v. Tam (2017), the Supreme Court reaffirmed that even offensive speech is protected. The Court noted that it has 'said time and time again that "the public expression of ideas may not be prohibited merely because the ideas are themselves offensive to some of their hearers".'

South Africa

South Africa adopts a dignity- and equality-centred approach grounded in its post-apartheid Constitution. Section 16 guarantees freedom of expression but expressly excludes advocacy of hatred based on race, ethnicity, gender, or religion that constitutes incitement to cause harm.

The Promotion of Equality and Prevention of Unfair Discrimination Act, 2000 (PEPUDA) prohibits speech that is harmful, promotes hatred, or incites harm on prohibited grounds. More recently, the Prevention and Combating of Hate Crimes and Hate Speech Act, 2023 (signed into law in 2024) criminalises the intentional publication or communication of material that incites harm or promotes hatred based on protected characteristics. Penalties may include fines or imprisonment of up to five years. The Act applies to electronic communications and includes limited exemptions for bona fide artistic, academic, scientific, or religious expression.

China

China regulates hate speech within a broader national security and social stability framework. Article 249 of the Criminal Law criminalises inciting ethnic hatred or discrimination, with penalties ranging from short-term detention to imprisonment of up to ten years. Media and online content are subject to extensive state oversight, including licensing requirements for internet service providers and monitoring of network traffic. Regulation extends across print, broadcast, and social media, with enforcement often linked to concerns about national unity and state authority.

Appearance in official databases

Parliamentary response

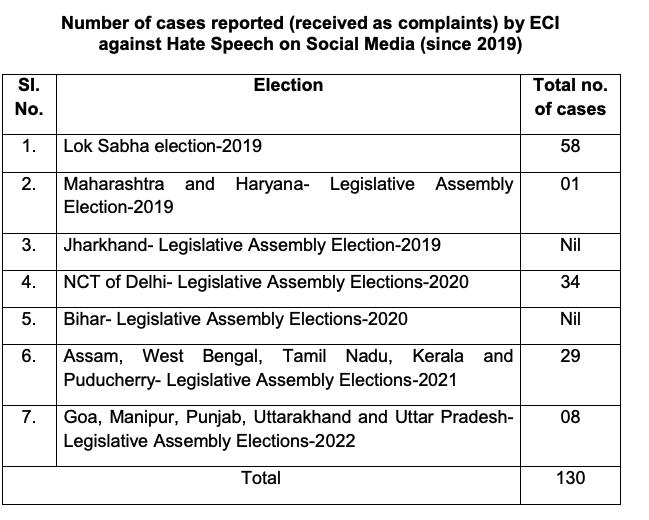

Lok Sabha, Unstarred Question No 3734, Hate Speech on Social Media

In response to a parliamentary question[14], the then Minister of Law and Justice, Kiren Rijiju, provided information on the number of cases reported (received as complaints) by the ECI against hate speech on social media since 2019.

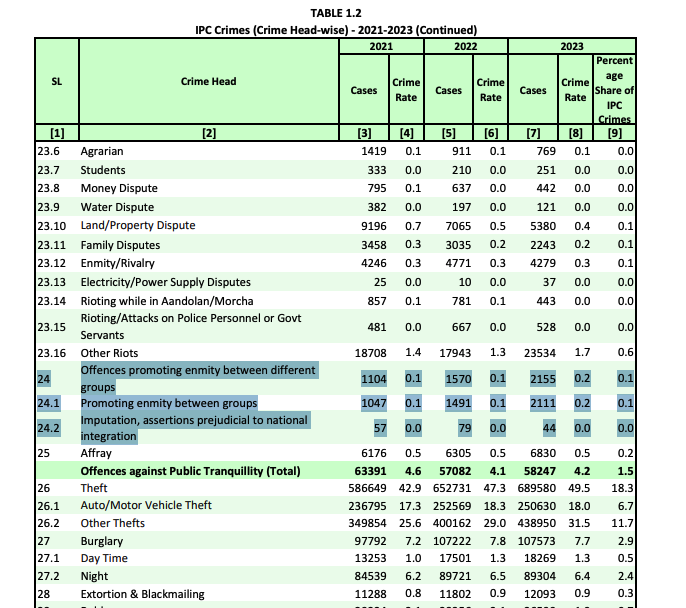

Crime in India report (NCRB)

The Crime in India report, published annually by the National Crime Records Bureau (NCRB), provides information related to hate speech under the crime head 'Offences promoting enmity between different groups'. It includes offences registered under the sub-heads 'promoting enmity between groups' (Sections 153A and 153AA IPC) and 'imputations, assertions prejudicial to national integration' (Section 153B IPC).

Secondary Sources

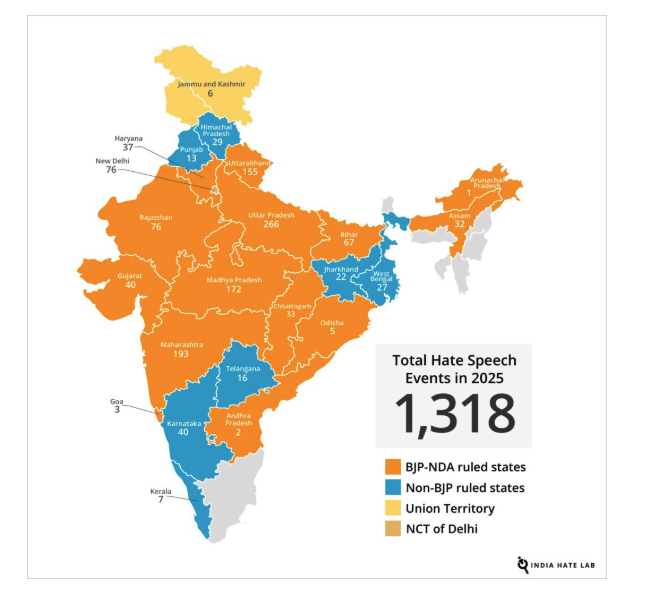

Hate Speech events in India (India Hate Lab (IHL), a project of the Center for the Study of Organized Hate (CSOH))

The report, "Report 2025: Hate Speech Events in India", provides a comprehensive, data-driven analysis of verified hate speech events targeting religious minorities in India throughout 2025. The study applies the Rabat Plan of Action’s six-part threshold test, derived from Article 20(2) of the ICCPR, to determine whether speech amounts to incitement to discrimination, hostility, or violence. The six factors assessed are:

- Context – the broader social and political environment.

- Speaker – the speaker’s influence, authority, and relationship to the audience.

- Intent – whether there was deliberate incitement (excluding mere negligence or recklessness).

- Content and Form – the nature of the language, rhetoric, and conspiratorial elements.

- Extent – the reach, frequency, and platforms used.

- Likelihood and Imminence – the probability and immediacy of resulting harm.

Methodologically, the study monitors activities of Hindu far-right groups and affiliated political actors across national and local levels, tracks hate speech incidents reported in media, and relies on a network of activists and journalists for documentation and evidence collection. It employs multilingual keyword-based data scraping across platforms such as Facebook, X, YouTube, Instagram, and Telegram to extract relevant content. Each video is rigorously authenticated by verifying location, date, and cross-referencing with at least two independent sources. Verified incidents are compiled into a structured database mapped by state, organisations involved, speaker identity, and affiliations. Finally, a detailed narrative analysis categorises content into overlapping thematic classifications to ensure systematic and methodical examination of hate speech patterns in India.
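The verification and database steps described above can be illustrated with a minimal sketch. It is not the study's actual tooling: the `Incident` record, its field names, and the helper functions are hypothetical, and the only rule taken from the description is that an incident counts as verified once its location and date are confirmed and at least two independent sources corroborate it.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional, Set


@dataclass
class Incident:
    """One candidate event. All field names are illustrative assumptions."""
    state: str
    speaker: str
    organisations: List[str]
    event_date: Optional[date] = None   # None until the date is confirmed
    location: Optional[str] = None      # None until the location is confirmed
    sources: Set[str] = field(default_factory=set)  # independent corroborating sources


def is_verified(incident: Incident, min_sources: int = 2) -> bool:
    """Confirmed date and location, plus at least `min_sources` independent sources."""
    return (
        incident.event_date is not None
        and incident.location is not None
        and len(incident.sources) >= min_sources
    )


def verified_counts_by_state(incidents: List[Incident]) -> Counter:
    """Count verified incidents per state, mirroring a state-wise mapping."""
    return Counter(i.state for i in incidents if is_verified(i))
```

With placeholder records (not real data), an entry that has a confirmed date, a confirmed location, and two sources would be counted, while an entry with a single source would be held back until it is corroborated.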

Research that engages with hate speech

Caste based hate speech in the digital sphere (S&MM)

The research report by Social & Media Matters argues that caste discrimination has adapted to new technological environments, manifesting in networked and platform-mediated forms. Digital caste hate often centres around anti-reservation narratives and opposition to the Scheduled Castes and Scheduled Tribes (Prevention of Atrocities) Act, 1989, accompanied by slurs such as “Aarakshanjeevi.” These expressions show resentment toward affirmative action policies and question the legitimacy and “merit” of Dalit advancement. The report cites Christophe Jaffrelot to demonstrate that caste continues to shape political mobilisation, economic access, and cultural identity, intersecting with religion, gender, and class. Despite constitutional guarantees and statutory protections, implementation gaps remain significant.

It further shows how digital transformation has intensified these dynamics. With over 850 million internet users and rapidly expanding social media penetration, platforms such as Facebook, Instagram, YouTube, and X (formerly Twitter) have become central sites of caste discourse. However, platform governance has historically lagged in recognising caste as a protected category. For example, caste was incorporated into hate speech policies on major platforms only between 2018 and 2020, reflecting delayed acknowledgment of caste-based discrimination within global moderation frameworks.

Ogburn’s “cultural lag” is used to explain this transformation. Technological development has outpaced normative and institutional adaptation, allowing entrenched caste hierarchies to reproduce themselves in new digital forms. Scholars therefore argue that addressing caste hate speech requires not only legal reform but also platform accountability, vernacular moderation strategies, and structural interventions that confront caste privilege embedded within both society and digital infrastructures.

Online misogyny in the times of digital feminism

In their paper titled "Online Misogyny: A Challenge for Digital Feminism?", Kim Barker and Olga Jurasz argue that the ideal of an inclusive digital public sphere has increasingly been undermined by the proliferation of online misogyny and gender-based abuse.

Rather than serving as neutral facilitators of democratic exchange, they argue that digital platforms have become hostile environments for women, particularly those who are politically vocal or advocate for gender equality. Online violence against women (OVAW), including online violence against women in politics (OVAWP), manifests through misogynistic harassment, sexualised threats, doxing, image-based abuse, and coordinated trolling campaigns. Empirical studies indicate that a substantial proportion of women globally have experienced sexist or misogynistic abuse online, with political women and women from marginalised racial or religious backgrounds facing disproportionately high levels of hostility.

They give the example of Diane Abbott, the first Black woman elected to the UK Parliament, receiving thousands of abusive tweets during the 2017 UK General Election campaign. Similarly, women politicians such as Sushma Swaraj have faced coordinated online harassment, often combining misogyny with racialised or communal abuse. These incidents reflect a broader pattern: abuse is frequently directed at women as women, rather than as political actors, reinforcing entrenched gender hierarchies.

Movements such as #MeToo demonstrate the Internet’s emancipatory potential, yet they also provoke counter-mobilisations that weaponise digital tools to silence women. The rise of right-wing populist politics in contexts including the United States under Donald Trump and Brazil under Jair Bolsonaro has further amplified gendered rhetoric, contributing to echo chambers where misogyny is normalised and politically mobilised.

Central to their argument is that online abuse does not remain confined to the digital sphere. The murders of Jo Cox in 2016 and Marielle Franco in 2018 underscore the continuum between online threats and offline violence. Scholars therefore argue that OVAW and OVAWP are not merely issues of offensive speech, but threats to democratic participation and equality.

State sanctioned communal hate speech

In February 2026, reporting by Frontline documented public statements by Himanta Biswa Sarma targeting Bengali-speaking Muslims in Assam, particularly those labelled as “Miyas.” The term, though originally derived from Persian meaning “gentleman,” has acquired a pejorative and exclusionary meaning in Assamese political discourse, frequently used to imply illegality, demographic threat, and outsider status.

The rhetoric attributed to the Chief Minister included references to selective evictions, calls for social and economic harassment, and suggestions that electoral rolls could be revised to delete “Miya” votes. Such statements are significant not merely for their content, but because they originate from a constitutional office-holder. While the Bharatiya Nyaya Sanhita, 2023 criminalises speech promoting enmity between groups (Section 196, erstwhile Section 153A of the IPC) and acts intended to outrage religious feelings (Section 299, erstwhile Section 295A of the IPC), enforcement remains inconsistent when political actors are involved.

This episode also reflects an electoral strategy observable in several states, where anti-minority rhetoric is deployed to consolidate majoritarian sentiment, divert attention from economic governance difficulties, and influence administrative machinery. Similar rhetorical patterns have appeared in speeches by Pushkar Singh Dhami, particularly in the context of “love jihad” and demographic anxieties. Normatively, the concern extends beyond offensive language. When state actors endorse or normalise exclusionary rhetoric, three consequences follow:

- Administrative bias - Bureaucratic enforcement (evictions, voter roll revisions, policing) may become selectively targeted.

- Democratic distortion - Minority political participation is chilled.

- Legitimisation of social hostility - Public incitement lowers the threshold for private violence.

The Internet, Internet Intermediaries and Hate Speech (SCRIPT-ed)

In the article "The Internet, Internet Intermediaries and Hate Speech,"[15] Natalie Alkiviadou examines the shift toward privatised online hate speech regulation, where social media platforms (SMPs) bear primary responsibility for content removal under laws like Germany's NetzDG. Alkiviadou employs doctrinal analysis of international human rights law (IHRL), such as ICCPR Article 20(2) and the Rabat Plan of Action, alongside ECtHR cases like Delfi AS v Estonia, and critiques empirical trends in platform moderation, including Facebook's high removal rates and AI biases against minority languages. Her key finding is that stringent obligations on SMPs, such as 24-hour takedown deadlines and multimillion-euro fines, prompt over-censorship. This silences marginalised voices (e.g., LGBTQ+ reclamation terms) and enables authoritarian abuse in countries like Turkey, while platforms themselves are not bound by IHRL proportionality tests. She recommends adhering to IHRL's high incitement thresholds, prioritising human over AI moderation, ensuring judicial oversight, and rejecting broad definitions that include insults, to balance harm prevention with free expression.

Data challenges

Judicial restraint and legislative gaps

In Pravasi Bhalai Sangathan v. Union of India, the Supreme Court considered whether the Election Commission of India could de-recognise political parties whose candidates engaged in hate speech. The Court declined to frame judicial guidelines, emphasising that the issue required legislative intervention rather than judicial law-making. This reflected a broader institutional reluctance to expand hate speech regulation without statutory clarity.

Similarly, the 267th Report of the Law Commission of India underscored the difficulty of formulating a precise definition of hate speech. It warned that vague or overly broad provisions risk misuse and may disproportionately infringe the constitutional guarantee of free speech.

Constitutional balancing

Hate speech regulation in India operates within the framework of Article 19(1)(a) of the Constitution, which guarantees freedom of speech and expression, subject to reasonable restrictions under Article 19(2). At the same time, Articles 14, 15, and 21 safeguard equality, non-discrimination, and life and personal liberty. The central constitutional challenge lies in balancing free expression with the protection of vulnerable communities from speech that incites discrimination, hostility, or violence.

Enforcement deficits and selectivity

Scholars and commentators have consistently identified enforcement as a core challenge. In ‘Hate Speech’ Dilemma, Soli J. Sorabjee argued that while Indian criminal law penalises speech promoting enmity or insulting religious beliefs, its application is often selective. He cautioned that mere hurt sentiments cannot justify criminalisation and warned against the misuse of hate speech laws to suppress legitimate debate.

Similarly, in Hate Speech and Free Speech, A.G. Noorani contended that the principal problem is not legislative inadequacy but weak enforcement. Despite provisions such as Section 153A of the Penal Code, he observed that lack of political will has allowed communal rhetoric to proliferate.

The India Hate Lab report has documented patterns of disproportionate targeting of minority communities, especially Muslims and Christians, raising concerns about both rising communal rhetoric and uneven application of existing laws.

State legislative reform and federalism concerns

Debates surrounding Karnataka’s proposed hate speech legislation illustrate tensions between strengthening deterrence and safeguarding civil liberties. Critics have questioned whether new statutes can remedy systemic policing deficiencies, and have raised concerns regarding overlap with central laws, federal competence, and the risk of broad discretionary powers enabling political misuse.

Digital hate culture

The rise of online platforms has transformed the scale and nature of hate speech. In The Ungovernability of Digital Hate Culture, Ganesh describes digital hate culture as networked communities that deploy discursive strategies to radicalise public discourse. Platform interventions, such as subreddit bans, have shown mixed effects, suggesting that content moderation alone may not dismantle the broader ecosystems sustaining online extremism.

Generative AI and emerging risks

The rapid expansion of generative AI technologies has introduced new regulatory complexities. Reports by the Center for the Study of Organized Hate and the Internet Freedom Foundation have documented the use of AI-generated synthetic images and videos that reinforce communal stereotypes, particularly targeting minority groups.

Concerns extend beyond users to AI systems themselves, with studies identifying algorithmic biases in outputs relating to caste and religious identities. Political actors have also reportedly deployed synthetic media in electoral campaigns, raising questions about attribution, liability, and electoral integrity.

Structural and regulatory constraints

Existing hate speech laws were drafted prior to algorithmic amplification and synthetic media. The speed, virality, and realism of AI-generated content complicate traditional legal categories such as intent, authorship, and platform responsibility. As a result, India’s regulatory framework faces challenges of constitutional balancing, legislative precision, consistent enforcement, and technological adaptation.

Way ahead

Expanding the ECI’s powers to regulate and act against hate speech during elections is crucial. Legislative amendments should empower the ECI to enforce stricter penalties and ensure compliance. Incitement of mobs through hate speech at election time leads to the polarisation of communities.

The Supreme Court's directive to the Law Commission underscores the need for robust recommendations to guide Parliament. Clear and precise legal definitions can help bridge the gap between freedom of expression and protection against hate speech. BNS provisions lack a nuanced understanding of the actual problems that plague Indian polity.

The law must prioritize impartiality and fairness, addressing hate speech in all its forms while protecting vulnerable groups from undue persecution. Such measures are essential to foster trust in the legal framework and promote social harmony.

References

- ↑ Black's Law Dictionary, 9th Edition; available at https://archive.org/details/blacks-law-9th-edition/page/1529/mode/1up?q=speech

- ↑ https://www.indiacode.nic.in/show-data?abv=CG&statehandle=123456789/2490&actid=AC_CEN_5_23_00037_186045_1523266765688&sectionId=45894&sectionno=153A&orderno=164&orgactid=AC_CG_61_334_00056_00056_1569320227809

- ↑ The Karnataka Hate Speech and Hate Crimes (Prevention) Bill, 2025 (LA Bill No 79 of 2025) (Karnataka Legislative Assembly, Sixteenth Legislative Assembly, Eighth Session). available at https://prsindia.org/files/bills_acts/bills_states/karnataka/2025/Bill79of2025KA.pdf

- ↑ https://www.smalegal.in/home/online-hate-speech-in-india-balancing-free-speech-and-regulation-a-legal-analysis

- ↑ The Hate Speech and Hate Crimes (Prevention) Bill, 2022 (Bill No CIX of 2022) as introduced in the Rajya Sabha on 8th December 2023. https://sansad.in/getFile/BillsTexts/RSBillTexts/Asintroduced/Hate%20speech-%20KR-%20E%201219202350136PM.pdf?source=legislation

- ↑ The Hate Crimes and Hate Speech (Combat, Prevention and Punishment) Bill, 2022 (as introduced in the Rajya Sabha on 9 December 2022) Bill No. LXXIV of 2022 https://sansad.in/getFile/BillsTexts/RSBillTexts/Asintroduced/hate-91222-E12142022113024AM.pdf?source=legislation

- ↑ Parliamentary Assembly of the Council of Europe, Parliamentary Toolkit on Hate Speech, February 2023 https://rm.coe.int/handbook-parliamentary-toolkit-on-hate-speech/1680aa571c accessed 24 February 2026.

- ↑ https://indiankanoon.org/doc/92834016/

- ↑ https://cdnbbsr.s3waas.gov.in/s3ca0daec69b5adc880fb464895726dbdf/uploads/2022/08/2022081654-1.pdf

- ↑ https://www.thehindu.com/news/national/panel-to-define-offences-of-speech-expression/article34644940.ece

- ↑ https://pib.gov.in/newsite/PrintRelease.aspx?relid=133765

- ↑ Regulation (EU) 2022/2065 of the European Parliament and of the Council of 19 October 2022 on a Single Market For Digital Services and amending Directive 2000/31/EC (Digital Services Act) https://eur-lex.europa.eu/eli/reg/2022/2065/oj/eng

- ↑ https://www.europarl.europa.eu/RegData/etudes/BRIE/2025/772890/EPRS_BRI(2025)772890_EN.pdf

- ↑ Lok Sabha, Unstarred Question No 3734, Hate Speech on Social Media (asked by Shri Ritesh Pandey, answered on 25 March 2022) https://sansad.in/getFile/loksabhaquestions/annex/178/AU3734.pdf accessed 24 February 2026.

- ↑ Natalie Alkiviadou, “The Internet, Internet Intermediaries and Hate Speech: Freedom of Expression in Decline” (2018) 15(1) SCRIPTed 1. available at https://script-ed.org/article/the-internet-internet-intermediaries-and-hate-speech-freedom-of-expression-in-decline/