Deepfakes

What are ‘Deepfakes’

Deepfakes are synthetic media created using Artificial Intelligence to generate or manipulate realistic images, videos and audio. They rely on advanced AI techniques, notably Generative Adversarial Networks (GANs) and autoencoders. A GAN consists of two competing neural networks: one generates fake content and the other attempts to detect it. This constant competition results in highly realistic but fabricated content that can be hard to detect. Deepfakes are a subset of the general category of “synthetic media” or “synthetic content.” Synthetic media is generally defined to encompass all media that has either been created through digital or artificial means (think computer-generated people) or been modified or otherwise manipulated through the use of technology, whether analog or digital. “Cheapfakes” are another form of synthetic media in which simple digital techniques are applied to content to alter the observer’s perception of an event. Deepfakes, along with automated content creation and modification techniques, merely represent the latest mechanisms developed to alter or create visual, audio, and text content. The key difference they represent, however, is the ease with which they can be made, and made well.[1]

Deepfakes are increasingly associated with misinformation campaigns, online fraud, political manipulation, identity theft, and gender-based violence, particularly the creation of non-consensual sexually explicit material targeting women.[2] This growing misuse, including misinformation and other unlawful content capable of misleading users, causing user harm, violating privacy, or threatening national integrity, has prompted regulatory intervention, such as harm-linked thresholds and provenance-based verification, to balance innovation, accountability, and fundamental rights.

Official Definition of ‘Deepfakes’

Deepfakes are defined as ‘synthetically generated information’ within the Indian legislative framework. The definition expressly targets deepfakes and AI-generated impersonations, while carving out exceptions for routine editing, accessibility enhancements, academic and training materials, and good-faith formatting or technical corrections that do not materially alter content. By formally defining synthetically generated information and embedding it within the intermediary liability framework, the law establishes a structured mechanism to address deepfakes and AI-enabled impersonation. The definition’s emphasis on deceptive realism, coupled with its explicit exclusions for legitimate uses, illustrates an attempt to strike a balance between innovation and user protection.

‘Deepfake’ as Defined in Legislation(s)

The Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021

Rule 2(1)(wa) of the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 (as amended in 2026)[3] adopts an effect-oriented definition to regulate “synthetically generated information”. This definition is significant because it focuses on deceptive potential rather than requiring proof of the specific technology used to create the manipulation.[4] It covers both high-end AI-generated deepfakes and low-tech manipulated media (“cheapfakes”) that achieve a similar deceptive impact, and it specifically excludes benign and good-faith uses of technology from its scope.

‘Synthetically generated information’ means audio, visual or audio-visual information which is artificially or algorithmically created, generated, modified or altered using a computer resource, in a manner that such information appears to be real, authentic or true and depicts or portrays any individual or event in a manner that is, or is likely to be perceived as indistinguishable from a natural person or real-world event;

Provided that for the purposes of this clause, an audio, visual or audio-visual information shall not be deemed to be ‘synthetically generated information’, where such audio, visual or audio-visual information arises from

(a) routine or good-faith editing, formatting, enhancement, technical correction, colour adjustment, noise reduction, transcription, or compression that does not materially alter, distort, or misrepresent the substance, context, or meaning of the underlying audio, visual or audio-visual information; or

(b) the routine or good-faith creation, preparation, formatting, presentation or design of documents, presentations, portable document format (PDF) files, educational or training materials, research outputs, including the use of illustrative, hypothetical, draft, template-based or conceptual content, where such creation or presentation does not result in the creation or generation of any false document or false electronic record; or

(c) the use of computer resources solely for improving accessibility, clarity, quality, translation, description, searchability, or discoverability, without generating, altering, or manipulating any material part of the underlying audio, visual or audio-visual information.[5]

- The carefully crafted exclusions demonstrate an effort to balance regulation with innovation. By exempting routine editing and accessibility-enhancing uses, the Rules avoid over-criminalising ordinary digital practices. Educational simulations, training materials, formatting tools, and good-faith improvements in clarity or translation are protected so long as they do not generate false records or materially misrepresent reality. This calibrated approach indicates that the objective is not to stifle creativity or legitimate AI use, but to address the specific risks posed by hyper-realistic synthetic impersonation and fabricated events.

- Pure text or written outputs, by themselves, are not SGI under Rule 2(1)(wa), since SGI is limited to audio/visual/audiovisual information. However, the amendments also insert Rule 2(1A) clarifying that, for specific provisions of the Rules, any reference to “information” used to commit an unlawful act shall include SGI. This ensures that unlawful acts involving synthetic audio/visual/audiovisual content are clearly covered, even where such SGI is accompanied by text (caption, description, message, post, etc.).[6]

The Information Technology Amendment Rules, 2026 for the regulation of synthetically generated information followed, to an extent, the phraseology of the EU Artificial Intelligence Act (Article 3(60))[7] in defining what constitutes ‘synthetically generated information’. However, they disregard entirely the difference between a ‘computer resource’ as defined under the IT Act and an ‘AI system’ as defined under the EU AI Act. The amendment seeks to categorise all AI applications as ‘some sort of software/tool/application’ and thereby bring them within the sweep of a computer system. The biggest issue with this sweeping approach is the failure to recognise that ‘AI is a system that is designed to operate at various levels of autonomy’, which a computer system is not capable of doing.[8]

Draft Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules, 2025

The Draft Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules, 2025[9] defined ‘synthetically generated information’ as information which is artificially or algorithmically created, generated, modified or altered using a computer resource, in a manner that such information reasonably appears to be authentic or true. The Draft Rules make no meaningful distinction between applications where algorithmic generation or modification creates genuine risks of deception or harm and benign uses where such risks are minimal or nonexistent. The draft definition is not limited to deepfakes and is far broader, allowing mere realistic depictions of ‘any person’ or event to fall within its ambit, without any standard of harm being stipulated. This blanket approach ignores context, purpose, and actual potential for harm.[10] The draft definition was subsequently modified, and the notified definition (under the notified 2026 amendment rules) is specifically confined to audio, visual, or audio-visual information, which makes it relatively more precise. Further, similar to the draft rules, the notified provision proscribes algorithmically or artificially created content that mirrors or replicates a natural person or a real-world event.[11]

In sum, the 2026 Amendment Rules mark a pivotal development in India’s digital regulatory landscape. By formally defining synthetically generated information and embedding it within the intermediary liability framework, the law establishes a structured mechanism to address deepfakes and AI-enabled impersonation. The definition’s emphasis on deceptive realism, coupled with its explicit exclusions for legitimate uses, illustrates an attempt to strike a balance between innovation and user protection. As synthetic media technologies continue to evolve, this framework is likely to play a central role in shaping the legal response to digital deception, privacy violations, and AI-driven misinformation in India.

In the Indian context, the Bharatiya Nyaya Sanhita, 2023, the IT Act, 2000, and the POCSO Act have provisions for offences like online sexual harassment, stalking, voyeurism, transmitting sexually explicit material, etc. The IT Rules, 2021 mandate intermediaries to take down non-consensual images within 24 hours of a complaint being raised.


Information Technology Act, 2000

Cyber Offences against individuals

Identity Theft and Fraud

Section 66C of the Information Technology Act, 2000 penalises identity theft, and Section 66D of the Information Technology Act, 2000 provides for the offence of 'Cheating by Personation using Computer Resources' by prescribing imprisonment of either description for a term which may extend to three years and fine which may extend to one lakh rupees.

Privacy violation and Obscene content

Sections 67 and 67A of the Information Technology Act, 2000 prohibit the publication or transmission of obscene or sexually explicit material in electronic form. Deepfake pornography, including AI-generated explicit videos of individuals who never engaged in the depicted acts, is considered a violation of these provisions. Section 67A, which specifically targets sexually explicit acts, is frequently invoked in FIRs involving manipulated content circulated via social media or messaging platforms.[12] These sections are often used cumulatively with Section 66E to address both the privacy violation and the obscene nature of the synthetic content.

Information Technology Rules, 2021

Periodic User Notification

The Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 strengthen user obligations and require intermediaries to remind users of such obligations more frequently. The amendment to Rule 3(1)(c) of the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 now requires intermediaries to inform users at least once every three (3) months rather than once a year about the consequences of violating their rules, privacy policy, or user agreement. This information must be shared in a simple and effective manner, through the intermediary’s terms and conditions, rules, privacy policy, user agreement, or any other appropriate means, in English or any language listed in the Eighth Schedule to the Constitution.

Users must be clearly informed that:

- The intermediary may suspend or terminate access, or remove or disable non-compliant content, if its rules, privacy policy, or user agreement is violated.

- If the violation involves unlawful creation, publication, transmission, storage, or sharing of information, the responsible user may face penalties under the Information Technology Act, 2000 or other applicable laws.

- If the violation relates to a mandatorily reportable offence, such as under the Bharatiya Nagarik Suraksha Sanhita, 2023 or the POCSO Act, 2012, it will be reported to the appropriate authority in accordance with law.

Takedown of Unlawful Information

The Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 (amended in 2026) require intermediaries to act much faster on receiving “actual knowledge” of any unlawful content present on the platform. If such knowledge is received through a court order or a reasoned notice from an authorised officer of the Appropriate Government or its agency, the intermediary must remove or disable access to the specified content within three (3) hours of receiving such order or notice. Earlier, intermediaries had thirty-six (36) hours to comply; this has now been reduced to three (3) hours.

The Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 (amended in 2026) also prescribe timelines for intermediaries to address user grievances and act upon specified categories of content as:

| Action(s) | Previous Timeline | Revised Timeline (under the 2026 amended Rules) |
|---|---|---|
| Resolution of user grievances | Within 15 days of receiving the complaint | Within 7 days of receiving the complaint |
| Takedown of specified harmful content (e.g., obscene content, content harmful to children) | Within 72 hours of reporting | Within 36 hours of reporting |
| Removal of intimate or impersonation content (e.g., content exposing private areas, depicting nudity or sexual acts, or involving impersonation including morphed or manipulated images) | Within 24 hours of receiving the complaint | Within 2 hours of receiving the complaint |
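The revised time limits can be read as simple offsets from the moment an order, notice, or complaint is received. The following is a minimal Python sketch of that arithmetic, not an implementation prescribed by the Rules. The category keys and function names are hypothetical labels chosen for illustration, and the durations are taken only from the summary given in this section.

```python
from datetime import datetime, timedelta, timezone

# Revised time limits as summarised in this section.
# The dictionary keys are hypothetical labels, not terms used in the Rules.
REVISED_LIMITS = {
    "court_or_government_order": timedelta(hours=3),
    "user_grievance": timedelta(days=7),
    "specified_harmful_content": timedelta(hours=36),
    "intimate_or_impersonation_content": timedelta(hours=2),
}


def compliance_deadline(category: str, received_at: datetime) -> datetime:
    """Return the latest time by which the intermediary should have acted."""
    return received_at + REVISED_LIMITS[category]


def is_overdue(category: str, received_at: datetime, now: datetime) -> bool:
    """True if the time limit for this category has already passed."""
    return now > compliance_deadline(category, received_at)


if __name__ == "__main__":
    received = datetime(2026, 3, 1, 10, 0, tzinfo=timezone.utc)
    for category in REVISED_LIMITS:
        print(category, compliance_deadline(category, received).isoformat())
```

Using timezone-aware timestamps avoids ambiguity about when the clock starts. How the moment of receipt is determined in practice is a legal question that this sketch does not address.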

Due Diligence by Intermediaries offering Computer Resource for creation of Synthetically Generated Information

Rule 3 of the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 (amended in 2026) introduces comprehensive due diligence requirements for intermediaries offering computer resources that enable, permit, or facilitate the creation, generation, modification, alteration, publication, transmission, sharing, or dissemination of SGI, in the following manner:

Deployment of preventive technical measures

The Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 (amended in 2026) introduce a new requirement mandating intermediaries to deploy reasonable and appropriate technical measures, including automated tools or other suitable mechanisms, to prevent users from creating, generating, modifying, altering, publishing, transmitting, sharing, or disseminating SGI that violates any law, including the IT Act, the Bharatiya Nyaya Sanhita, 2023, the POCSO Act, 2012, and the Explosive Substances Act, 1908. Specifically, intermediaries must prevent the creation or dissemination of SGI that:

- Contains child sexual exploitative and abuse material, non-consensual intimate imagery content, or is obscene, pornographic, paedophilic, invasive of another person's privacy (including bodily privacy), vulgar, indecent or sexually explicit;

- Results in the creation, generation, modification or alteration of any false document or false electronic record;

- Relates to the preparation, development or procurement of explosive material, arms or ammunition; or

- Falsely depicts or portrays a natural person or real-world event by misrepresenting, in a manner that is likely to deceive, such person's identity, voice, conduct, action, statement, or such event as having occurred.

Labelling and metadata requirements

The Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 (amended in 2026) require intermediaries to ensure that such SGI is prominently labelled in a manner that ensures “prominent visibility” of disclosure, that is easily noticeable and adequately perceivable, or, in the case of audio content, through a prominently prefixed audio disclosure. The labelling must allow immediate identification of such information as having been synthetically generated, created, modified or altered using a computer resource (for example, a lawful SGI or AI-generated video should carry a visible 'synthetically generated' notice).

Unlike the Draft Rules, which required labels to cover at least ten (10) percent of the surface area of visual content or, in the case of audio content, during the initial ten (10) percent of its duration, the Notified Rules provide intermediaries with discretion to determine how to prominently label such content. Additionally, the Notified Rules require SGI to be embedded with permanent metadata or other appropriate technical provenance mechanisms, to the extent technically feasible, including a unique identifier, to identify the computer resource of the intermediary used to create, generate, modify or alter such information.
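To make the provenance requirement concrete, the sketch below builds a small metadata record carrying a label, a unique identifier, and an identifier for the generating tool. It is an illustration only. The Notified Rules, as described here, do not prescribe a format, so every field name, the choice of a UUID, and the use of a SHA-256 content hash to bind the record to the file are assumptions made for this example.

```python
import hashlib
import json
import uuid


def build_provenance_record(content: bytes, tool_id: str) -> dict:
    """Build an illustrative provenance record for a piece of SGI.

    All field names are hypothetical; the Rules do not specify a schema.
    """
    return {
        "label": "Synthetically generated",
        "unique_identifier": str(uuid.uuid4()),  # assumed identifier scheme
        "generating_tool": tool_id,  # identifies the intermediary's computer resource
        "content_sha256": hashlib.sha256(content).hexdigest(),
    }


def record_matches(content: bytes, record: dict) -> bool:
    """Check that a record still corresponds to the given content bytes."""
    return hashlib.sha256(content).hexdigest() == record["content_sha256"]


if __name__ == "__main__":
    media = b"example synthetic media bytes"
    record = build_provenance_record(media, tool_id="example-image-generator")
    print(json.dumps(record, indent=2))
    print(record_matches(media, record))
```

A record kept alongside the file, as here, is easy to strip, which is why the Rules also speak of embedding and of preventing removal. Embedding the record inside the media container would need format-specific tooling that this sketch does not attempt.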

Prevention of removal or suppression

Intermediaries are required to prevent any modification, suppression or removal of the label, permanent metadata, or unique identifier displayed or embedded in the SGI. The Draft Rules requirement to cover at least ten (10) percent of the surface area of visual content or, in the case of audio content, during the initial ten (10) percent of its duration, was highly criticised by stakeholders and industry participants. The rationale behind requiring 10% of the content to be covered by the identifier appeared arbitrary, and applying a uniform threshold across varying lengths and formats of content was considered impractical and disruptive to user experience.

This requirement, coupled with the wide definition of SGI under the Draft Rules, which covered a broad range of benign content, could have caused extensive labelling of content and risked desensitising users and creating notification fatigue. The Notified Rules have addressed this concern by granting intermediaries discretion to determine how to "prominently" label visual content or prefix audio disclosures. While this is a positive development for industry providing flexibility to intermediaries to determine how content should be labelled, it introduces interpretive challenges regarding what constitutes sufficient prominence, potentially leading to inconsistent implementation across platforms.

Additionally, the obligation on an intermediary for labelling and embedding of metadata or identifiers is limited to the SGI created or modified using its own computer resource (for example, an AI-powered image or video generation tool offered by the intermediary). It may therefore be interpreted that an intermediary is not required to label SGI that was created elsewhere and merely hosted on its platform. However, while this interpretation may provide some operational flexibility with respect to labelling and metadata or identifiers embedding requirements, it should not be construed as relieving intermediaries of their other statutory obligations under the IT Rules including the obligation to takedown unlawful content, grievance redressal timelines, and other due diligence obligations in respect of SGI hosted on their platforms, irrespective of whether such content was created using their own computer resources. Additionally, the blanket requirement for embedding SGI with permanent unique metadata or identifiers remains a heavy compliance burden.

Additional Due Diligence requirements for Significant Social Media Intermediary (SSMI)

The Notified Rules impose enhanced due diligence obligations on SSMIs that enable users to display, upload, or publish information on their platforms. As defined under the IT Rules, an SSMI is a social media intermediary having more than fifty (50) lakh registered users in India, where a “social media intermediary” is an intermediary that primarily or solely enables online interaction between users and allows them to create, upload, share, disseminate, modify, or access information through its services. The Notified Rules require SSMIs to observe the following measures:

1. Prior to displaying, uploading, or publishing any information, the SSMI must require users to declare whether the information is synthetically generated.

2. The intermediary must deploy reasonable and appropriate technical measures, including automated tools or other suitable mechanisms, to verify the accuracy of such declarations, taking into account the nature, format, and source of the information.

3. Where such declaration or technical verification confirms that the content is synthetically generated, the intermediary must clearly and prominently display an appropriate label or notice indicating that the content is synthetically generated.

The Notified Rules clarify that if it is established that the SSMI knowingly permitted, promoted, or failed to act upon SGI in violation of the Notified Rules, it would be deemed to have failed to exercise due diligence and hence risk losing the safe harbour protection under Section 79 of the IT Act. For clarity, the Notified Rules specify that the responsibility of an SSMI extends to taking reasonable and proportionate technical measures to verify user declarations and to ensure that no SGI is published without the required declaration or label. These obligations were initially proposed in the Draft Rules and, despite industry concerns regarding practical implementation challenges and the technical limitations of verification tools, have been retained in the Notified Rules without substantive modification.

Given these heightened obligations, SSMIs will in practice need to deploy verification tools tailored to the nature, format, and source of uploaded information. For instance, when a user uploads a video, the platform's automated system may analyse metadata, visual patterns, and audio signatures to verify whether the content is synthetically generated. If the user declares the content as synthetically generated, or if the technical measures confirm it as such, the platform must apply a clear and prominent label such as "This video was created using AI" or "Synthetically generated content" before allowing publication.

The liability implications of these requirements are significant. For example, suppose a user uploads deepfake content depicting a public figure making false statements, which clearly violates the prohibition on SGI that falsely portrays a natural person in a manner likely to deceive. If the SSMI's technical measures fail to detect this content, whether because of limitations in the automated tools or because the verification mechanisms were not sufficiently robust to evaluate the nature of such content, and the content is published without the required declaration or label, the SSMI risks losing its safe harbour protection. In such cases, the platform could be held liable for hosting unlawful content, facing potential legal action and penalties. Given these heightened obligations and liability risks, SSMIs should proactively invest in robust, scalable verification infrastructure that combines automated detection tools with human oversight mechanisms.
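The declare, verify, and label sequence described for SSMIs can be sketched as a small decision function. This is a simplified Python illustration under stated assumptions and does not describe how any platform works. The `detector_score` field stands in for the output of some automated detection tool, and the threshold value and label text are hypothetical. The sketch covers only the labelling decision for lawful content and does not model the separate obligation to block unlawful SGI.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative threshold for the hypothetical detector; not taken from the Rules.
DETECTION_THRESHOLD = 0.8
LABEL_TEXT = "Synthetically generated content"


@dataclass
class Upload:
    declared_synthetic: bool  # the user's declaration at upload time
    detector_score: float     # assumed 0.0 to 1.0 output of an automated tool


def needs_label(upload: Upload, threshold: float = DETECTION_THRESHOLD) -> bool:
    """Label if the user declares the content synthetic or the detector flags it."""
    return upload.declared_synthetic or upload.detector_score >= threshold


def label_for(upload: Upload) -> Optional[str]:
    """Return the label to display before publication, or None if none is needed."""
    return LABEL_TEXT if needs_label(upload) else None


if __name__ == "__main__":
    print(label_for(Upload(declared_synthetic=True, detector_score=0.1)))
    print(label_for(Upload(declared_synthetic=False, detector_score=0.95)))
    print(label_for(Upload(declared_synthetic=False, detector_score=0.2)))
```

The third case shows the liability gap discussed above: content that is undeclared and scores below the threshold is published without a label, so the choice of detector and threshold bears directly on safe harbour risk.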

Bharatiya Nyaya Sanhita, 2023 (BNS)

Criminal Defamation

The malicious usage of deepfakes often results in harm and loss of reputation to the person against whom it is used. Section 356 of the Bharatiya Nyaya Sanhita, 2023 criminalises defamation that includes any visible representation that harms a person’s reputation.[13] Deepfakes created to portray individuals engaging in illegal, immoral, or socially damaging acts fall under this section, regardless of whether the underlying material is algorithmically synthesised.[14] Courts have treated manipulated or morphed media as capable of defaming individuals, and deepfakes logically extend this doctrine.[15] (For more information check wiki page on criminal defamation)

Organised Crime

The Bharatiya Nyaya Sanhita, 2023 added a new provision, namely “Organised Crime”, under Section 111.[16] The clause leaves ambiguous terms like “cyber-crimes having severe consequences” open to interpretation, while defining “organised crime” in an exceptionally broad way, encompassing anything from economic offences to cybercrimes. It is the only provision that refers to the term “cyber-crimes” and, to a limited extent, it may cover various improvised offences relating to AI-facilitated technologies, like deepfakes.[17] It may criminalise participation in organised cybercrime groups, including coordinated networks involved in “deepfake-for-hire,” extortion rings using AI-generated sexual content, or professionalised operations producing impersonation videos for fraud. The provision is broad enough to include groups that develop, distribute, or monetise deepfake generation tools for criminal use.

Other Provisions

Similarly, threatening a person with any injury to him/any person in whom he has any interest, intending to cause alarm or cause him to do/not do something, constitutes criminal intimidation which is an offence under Section 351 of the Bharatiya Nyaya Sanhita, 2023. Deepfakes created as fabricated evidence, altered videos used to misrepresent events, or synthetically generated clips intended to resemble authentic recordings may qualify as “forged electronic records” under Section 336 of the BNS.[18] This includes manipulated CCTV footage, fake political speeches, or AI-generated confessions that could mislead investigative or judicial processes.[19]

Bharatiya Sakshya Adhiniyam, 2023

Section 63 of the Bharatiya Sakshya Adhiniyam, 2023, which deals with the admissibility of electronic records, requires authentication certificates for electronic records, including hash and source verification, which are critical to the admissibility of deepfake evidence. These requirements address privacy paradoxes and bolster ethical and technological defences. (For more information check wiki page on Electronic evidence)
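Hash verification, as mentioned above, amounts to computing a cryptographic digest of a file and comparing it with the value recorded at the time of certification. The Python sketch below shows the idea using SHA-256 from the standard library. The choice of SHA-256 and the function names are assumptions made for illustration; this section does not say which algorithm a certificate should use.

```python
import hashlib


def sha256_of_file(path: str, chunk_size: int = 65536) -> str:
    """Compute the SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_certified_hash(path: str, certified_hex: str) -> bool:
    """True if the file's current digest equals the digest recorded earlier."""
    return sha256_of_file(path) == certified_hex.strip().lower()
```

A matching digest shows only that the file has not changed since the hash was recorded. It says nothing about whether the recording was authentic or synthetic to begin with, which is why source verification is listed alongside hashing.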

Copyright Act, 1957

The existing copyright framework under Section 13 of the Copyright Act, 1957 protects original works, including artistic and literary works. Deepfakes, however, are created by manipulating existing images or the likeness of individuals and may thus not fit within the traditional concept of copyright. Section 52 of the Copyright Act, 1957 sets out an exhaustive list of acts that are not deemed to be infringements.

Further, Section 57(1)(b) gives moral rights to authors, namely the right to integrity, under which the author of a literary work can claim damages or restrain the distortion, mutilation or modification of the work if it harms the author's reputation. All these provisions only give authors ‘first ownership’ rights. The Copyright Act, 1957 could protect people from having their likenesses misused, but it does not clearly recognise personality rights over one’s voice and image, which makes it hard for victims to get help.

Personality Rights

The development of the right to personality in India, as discussed below, can be broadly summarised into two aspects: first, privilege over commercial use, more popularly termed the ‘right to publicity’, and second, the right to privacy. Since deepfakes involve morphed images and voices, there is an automatic infringement of one’s privacy and personality rights. Unduly using one’s personality traits in creating deepfakes can lead to irretrievable damage to one’s reputation. The right to personality is an emerging concept and is at a nascent stage of development in India and other legal jurisdictions worldwide. No legislation in India explicitly mentions an individual’s right to personality; hence, this right has developed through judicial precedents.[20] (For more information check wiki page on Personality Rights)

Trade Marks Act, 1999

Section 14 of the Trade Marks Act, 1999 imposes restrictions on using personal names and representations. Further, deepfakes using well-known brand names or catchphrases may trigger liability under Section 29 of the Trade Marks Act, 1999, which addresses trade mark infringement or passing off. That said, the Trade Marks Rules, 2017 and the Trade Marks Act, 1999 are insufficient to handle the difficulties presented by personality trademarks. They do not acknowledge non-traditional personality traits like voice, gestures, or style, which are increasingly used in commercial contexts and in the field of artificial intelligence. Amending the Act to include these characteristics as ‘marks’ under Section 2(1)(m) would provide a more thorough framework for safeguarding a person’s identity and preventing its unauthorised use. These modifications would also strengthen enforcement mechanisms and make the personality trademark registration procedure more transparent.[21]

Digital Personal Data Protection Act, 2023 (“DPDP Act”)

The recently enacted Digital Personal Data Protection Act, 2023 (“DPDP Act”) requires Data Fiduciaries (including AI companies) to process personal data lawfully, with user consent and reasonable security safeguards. Section 6 of the DPDP Act mandates consent for personal data processing, so that non-consensual deepfake use is classified as a personal data breach attracting fines of up to ₹250 crore. This complements Section 79 of the IT Act through data minimisation and fiduciary duties.

The DPDP Act, although important for protecting personal data, does not address the training of AI models using scraped personal data without consent.[22] The DPDP Act also places responsibilities on the Data Principal under Section 15, which restricts the widespread problem of impersonation. This is important in the present context, as AI-generated media is frequently used to deceive people by impersonating another person. Data Fiduciaries can track the origin of a deepfake upon receipt of a complaint and impose liability on the person involved in uploading it on their platform.[23]

IT Rules

Beyond the definitional framework, the Amendment Rules translate this concept into concrete compliance obligations for intermediaries. Platforms that enable the creation, modification, or dissemination of synthetically generated information must deploy reasonable and appropriate technical measures to prevent unlawful synthetic content. Certain high-risk categories, such as non-consensual intimate imagery, child sexual exploitative material, false electronic records, deceptive impersonation, and fabricated depictions of real-world events, are subject to strict preventive obligations. Lawful synthetic content, where permitted, must be clearly and prominently labelled as synthetically generated and embedded with permanent metadata or provenance identifiers to ensure transparency and traceability.[24]

The Rules also introduce significantly tightened timelines for removal and grievance redressal, particularly in cases involving impersonation, morphed content, or intimate imagery. This reflects recognition of the speed at which deepfakes can spread online and the irreversible harm they may cause. Significant Social Media Intermediaries are further required to obtain user declarations regarding whether uploaded content is synthetic and to deploy technical verification measures prior to publication, thereby reinforcing platform accountability.[25]

Platform Accountability and Conditional Safe Harbour:

Legal Provision(s) Relating to ‘Deepfake’

Although Indian statutes do not explicitly employ the term “deepfake,” several provisions across the Information Technology Act, 2000 (IT Act) and the Bharatiya Nyaya Sanhita, 2023 (BNS) are routinely invoked in matters involving synthetic, morphed, or manipulated media.[26][27] In practice, deepfake-related First Information Reports (FIRs) tend to rely on offences addressing impersonation, obscenity, privacy violations, forgery of electronic records, extortion, and defamation, depending on the nature of the synthetic content and the harm caused.[28]

Government advisories and enforcement circulars further direct police and intermediaries to map deepfake harms onto existing statutory categories until a standalone legislative framework emerges.[29]

Information Technology Act, 2000

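Section 66D – Cheating by Personation Using Computer Resources: Whoever, by means of any communication device or computer resource cheats by personation, shall be punished with imprisonment of either description for a term which may extend to three years and shall also be liable to fine which may extend to one lakh rupees.[30][31]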

Section 66E – Violation of Privacy: Whoever, intentionally or knowingly captures, publishes or transmits the image of a private area of any person without his or her consent, under circumstances violating the privacy of that person, shall be punished with imprisonment which may extend to three years or with fine not exceeding two lakh rupees, or with both.[32][33]

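Sections 67 and 67A – Obscenity and Sexually Explicit Content: Sections 67 and 67A of the IT Act penalise the publication or transmission, in electronic form, of obscene material and of material containing sexually explicit acts, respectively.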

‘Deepfake’ as Defined in International Instrument(s)

International regulatory frameworks have begun articulating explicit definitions of “deepfakes” to govern the creation, distribution, and disclosure of AI-generated or AI-manipulated media. These frameworks typically adopt a technology-neutral but harm-oriented approach, combining definitional clarity with duties of transparency, labelling, and accountability for AI system providers and platforms.

This regulatory evolution is complemented by emerging scholarly analysis examining how deepfakes intersect with existing bodies of international law. One recent study explores whether synthetic media, when deployed in situations of armed conflict, can be accommodated within the framework of international humanitarian law. The paper argues that although no treaty instrument expressly defines or regulates “deepfakes,” their use may fall within established legal categories governing deception in warfare, such as lawful ruses or prohibited perfidy.

The study highlights that international humanitarian law, particularly the Geneva Conventions and their Additional Protocols, regulates the effects and methods of deception rather than the technology itself. Accordingly, a deepfake used to mislead enemy forces may constitute a permissible ruse of war, whereas a deepfake that feigns protected status, such as surrender or humanitarian neutrality, could amount to perfidy and therefore be prohibited.

In this way, the paper illustrates that even in the absence of explicit definitional provisions, existing international legal frameworks adopt a function-based and harm-oriented approach comparable to contemporary AI regulatory instruments. The legality of deepfakes depends not on their synthetic nature, but on context, intent, and consequence.[34] This analysis demonstrates both the adaptability and the limits of current international law. While established doctrines can absorb certain uses of synthetic media, the absence of explicit definitional clarity may create interpretive uncertainty, particularly where deepfakes target civilian cognition, democratic processes, or humanitarian protections.

The Copyright Act, 1957 may apply where the content used to make a deepfake is protected by copyright. Under Section 51 of the Act, using a work over which another person holds exclusive rights, without that person's permission, amounts to infringement. This provides a legal route for addressing deepfakes that reproduce protected content (JOLT, 2023).

India is now living in an era of hyper-realistic AI-generated media, or “deepfakes,” which poses particular challenges to its ethical and legal systems. Deepfakes have the potential to transform storytelling and entertainment, but if used inappropriately they can cause serious damage and raise serious concerns about human rights, the privacy of personal data, and the truth itself.

‘Deepfake’ as Defined in Official Document(s)

https://www.pib.gov.in/PressReleasePage.aspx?PRID=2154268&reg=3&lang=2

SOP

https://www.pib.gov.in/PressReleasePage.aspx?PRID=2188886&reg=3&lang=2

ECI 2025 advisory requiring political parties and candidates to identify and disclose synthetically generated campaign material: https://www.eci.gov.in/eci-backend/public/api/download?url=LMAhAK6sOPBp%2FNFF0iRfXbEB1EVSLT41NNLRjYNJJP1KivrUxbfqkDatmHy12e%2FzX%2FLARKC1lI3JwqUiIIk3e%2B7gOOlUZXPW%2BNP40Y7lTYHctZoALayD8g8gQjxekHZkSIq%2Fi7zDsrcP74v%2FKr8UNw%3D%3D

CERT-In November 2024 Advisory https://www.cert-in.org.in/s2cMainServlet?pageid=PUBVLNOTES02&VLCODE=CIAD-2024-0060

Focussing on fraud, it recommends AI/ML detection tools by C-DAC, source verification, watermarking, and C2PA adoption, with 2025 trials ongoing.

https://www.meity.gov.in/static/uploads/2024/02/9f6e99572739a3024c9cdaec53a0a0ef.pdf

Building on earlier guidance directing intermediaries to prevent unlawful or discriminatory AI use and to label synthetic outputs, the Draft Amendments seek to formalise platform duties for detecting, disclosing, and moderating such content, particularly when it may mislead users.

Advisory to Intermediaries Regarding Deepfakes (7 November 2023)

The Ministry of Electronics and Information Technology (MeitY) issued the advisory of 7 November 2023 in response to a widely circulated AI-generated video of actor Rashmika Mandanna, marking the first official governmental use of the term “deepfake” in India’s regulatory framework.[35] The advisory described deepfakes as manipulated or synthetic content produced using artificial intelligence that is capable of misleading users about identity, source, or authenticity. By situating deepfakes within the broader category of misinformation and harmful online content, MeitY clarified that such material falls under Rule 3(1)(b)(vii) of the IT Rules 2021, which prohibits content that impersonates another person, is patently false, or threatens public order.[36]

The document emphasised that deepfakes pose serious risks to individual dignity, privacy, and reputational security, and further warned that synthetic media could distort democratic discourse by circulating quickly through digital platforms and appearing convincingly authentic.[37] It directed intermediaries to enhance their detection mechanisms for manipulated media, improve user-friendly reporting pathways, and ensure swift removal or disabling of access to harmful deepfake content once notified. MeitY additionally encouraged platforms to experiment with technological safeguards such as labelling, provenance indicators, and automated authenticity verification systems, signalling the government’s expectation that intermediaries adopt increasingly proactive approaches to synthetic media governance.

Although the advisory did not create new legal obligations, its operational framing made clear that deepfake content would henceforth be evaluated under pre-existing due diligence requirements. This document therefore functioned as India’s first de facto definitional instrument, articulating how digital platforms should identify, moderate, and report synthetic media under the IT Act ecosystem.

Advisory No. 2(4)/2023-CyberLaws-2 (26 December 2023)

MeitY’s second advisory, issued on 26 December 2023, expanded the regulatory approach by shifting emphasis toward user comprehension, platform disclosure, and accountability mechanisms.[38] While reaffirming that deepfakes constitute harmful and deceptive synthetic media, the December advisory required intermediaries to translate their terms of service, prohibited-content policies, and community guidelines into major regional languages so that users clearly understand that creating or sharing deepfakes amounting to impersonation, obscenity, or fraud is a criminal offence under Indian law. This emphasis on regional-language accessibility marked a significant recognition of India’s linguistic diversity and the need for users across socio-economic backgrounds to understand the implications of participating in deepfake-related activities.

The document further required intermediaries to strengthen transparency reporting by providing disaggregated data on deepfake-related user complaints, platform takedowns, and the average time taken to act on such requests.[39] MeitY stressed that greater transparency would help build user trust, enhance institutional accountability, and provide essential data for future regulatory interventions. The advisory also reiterated the expectation that platforms develop proactive systems for detecting manipulated content, including automated tools and structured verification mechanisms, in addition to responding to user reports.

Although neither advisory possesses statutory force, both operate as authoritative interpretive guides for digital platforms, enforcement agencies, and courts on how deepfake content is to be addressed under the Information Technology Act and the IT Rules.

Subsequent government communications, including PIB press releases and Digital India publications, expand this view by describing deepfakes as AI-generated or AI-manipulated media that “alter, superimpose, or synthesise” a person’s face, voice, or likeness to create misleading audiovisual material.[40][41] These documents collectively treat deepfakes as a subset of “synthetic content” or “AI-generated content,” incorporating them into platforms’ obligations under Rule 3(1)(b) of the IT Rules relating to false information, impersonation, sexually explicit material, and content that may harm minors or jeopardise national security.

Private Member Bill

THE DEEPFAKE PREVENTION AND CRIMINALISATION BILL, 2023

‘Deepfake’ as Defined in Official Government Report(s)

Government committees in India, particularly parliamentary standing committees responsible for communications, information technology, and internal security, have expressly acknowledged deepfakes as a distinct and emerging threat to information integrity, democratic processes, public order, and individual privacy.[42]

22nd Report of the Standing Committee on Communications and Information Technology

The Committee’s 22nd Report, titled 'Review of Mechanism to Curb Fake News', provides one of the earliest parliamentary articulations of deepfakes as a sophisticated subset of fabricated information produced through advanced AI technologies.[43] The Report distinguishes deepfakes from conventional misinformation on the basis of realism, scalability, and the ability to manipulate public perception by fabricating highly convincing audiovisual content that appears authentic to ordinary viewers.

The Report underscores that deepfakes create heightened vulnerabilities during election cycles, noting that AI-manipulated political messages, speeches, or images can influence voter behaviour, exacerbate communal tensions, and destabilise democratic deliberation.[44] Accordingly, it recommends that social media platforms implement enhanced real-time monitoring protocols during sensitive political periods, including faster takedown obligations, proactive detection systems, and closer coordination with the Election Commission of India.

Among its structural recommendations, the Committee calls for the exploration of specialised legislation tailored to the creation, dissemination, and malicious use of deepfakes, observing that the IT Act 2000 and the Bharatiya Nyaya Sanhita 2023 lack a dedicated definitional and regulatory framework for AI-generated manipulated media.[45] It further proposes the development of a licensing or registration framework for developers of deepfake-generation tools and synthetic media applications, patterned on international best practices relating to high-risk AI systems.

The Report also emphasises platform accountability, noting the need for clear audit trails, forensic verification capabilities, and cooperation between digital intermediaries and law enforcement agencies.[46] Capacity-building measures, including training of cyber forensic laboratories, investment in detection technologies, and public awareness campaigns, are recommended as part of a multi-stakeholder strategy to counter the proliferation of deepfakes in India.

‘Deepfake’ as Defined in Case Law(s)

Indian courts, particularly the Delhi High Court, have begun recognising deepfakes within the legal frameworks of personality rights, privacy, misappropriation of identity, and unauthorised commercial exploitation. Although no Indian judgment has yet formulated a statutory or exhaustive judicial definition of “deepfake,” courts consistently treat AI-manipulated or synthetic representations of a person as an aggravated form of impersonation, passing off, and violation of dignity and reputation.[47][48] This emerging jurisprudence situates deepfakes within the established doctrines of privacy (as recognised in K.S. Puttaswamy v Union of India), publicity rights, and the right to control one’s persona, which Indian courts have increasingly treated as protectable interests in both commercial and non-commercial contexts.[49]

Deepfakes are therefore indirectly defined in Indian case law as any AI-generated or AI-altered media that appropriates, manipulates, or simulates an individual’s likeness, voice, or identity in a manner that creates confusion, violates autonomy, or commercially exploits reputation without consent.[50]

Thus, under Indian law, deepfakes are not treated as a separate legal category but are addressed through existing principles of privacy, personality rights, and protection against misrepresentation. A detailed discussion of these doctrines is provided in the separate section on Personality Rights.

https://www.europarl.europa.eu/thinktank/en/document/EPRS_BRI(2025)775855

https://www.europarl.europa.eu/RegData/etudes/BRIE/2025/775855/EPRS_BRI(2025)775855_EN.pdf

In Gaurav Bhatia v. Naveen Kumar and Ors.,[11] defamatory deepfake videos showing the plaintiff being beaten up were circulated in the press and media. The Court noted that such videos not only harmed the plaintiff’s reputation but also posed a persistent threat of being aired and used against the plaintiff in the future and, therefore, granted an injunction. Similarly, the Court has upheld the personality rights[12] of celebrities, condemning the use of deepfakes without their consent, as it could jeopardise their careers and result in the misuse of such tools.[13]

In Neela Film Productions (P) Ltd. v. TaarakMehtakaooltahchashmah.com, the Court noted that even AI-generated deepfake content depicting fictional characters can constitute infringement of the copyright and trade marks in those characters held by the media house. The Court granted an ex parte ad interim injunction restraining the defendants, together with a John Doe order restraining all unknown parties, including websites, e-commerce platforms, and YouTube channels, from infringing Neela Film Productions’ copyright and trade mark rights.

It is important to note that, while the applicable law can often be determined by where the harm occurs (the principle of lex loci delicti), this becomes difficult where deepfakes are created outside India’s jurisdiction. The law on crimes committed through artificial intelligence that fall outside existing recognised categories of cybercrime is unclear, which complicates enforcement under Indian legal standards. https://www.scconline.com/blog/post/2025/11/08/deepfake-regulation-rights/

Although most early deepfake cases have concerned celebrities, the ruling in the Ms. Kamya Buch case marks a pivotal shift. The Delhi High Court ruled on a nationwide cyberbullying campaign against Ms. Buch, a leading scholar and activist, which involved manipulated and profoundly humiliating material, including pornographic deepfakes. The ruling was based not on the commercial value of her image but on a flagrant abuse of her essential rights to privacy, dignity, and reputation. It sets a strong precedent that this technology is not only a concern for public figures with commercially valuable identities, but a weapon of personal violation that can be directed against any citizen. The Court's emphasis on human dignity as a central legal justification places the Indian judiciary at the forefront of international legal thinking on safeguarding fundamental rights against AI abuse.

International Experience

The European Union adopted its first Artificial Intelligence Act (“EU AI Act”), which governs the use and regulation of AI and includes rules on deepfakes. In 2023, the UK government introduced reforms in its Online Safety Act and, for the first time, criminalised the sharing of deepfake intimate images. It also proposed amendments to its Criminal Justice Bill to criminalise the creation of such images without consent. Additionally, countries like the United States (Deepfakes Accountability Bill, 2023) and China (Artificial Intelligence Law of the People’s Republic of China) have introduced Bills requiring deepfakes to be labelled on online platforms, with non-compliance attracting criminal sanctions. https://clt.nliu.ac.in/?p=1097

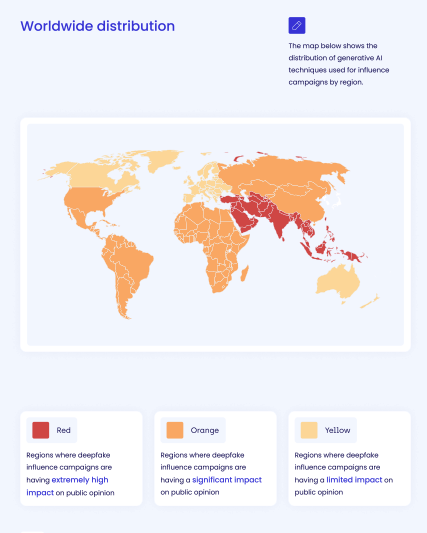

Countries across the world have adopted diverse regulatory, technological, and criminal-law strategies to address deepfakes, reflecting differences in governance structures, platform accountability regimes, human rights frameworks, and state capacity.[51][52][53] International approaches range from rights-based transparency models to prescriptive state control, offering a reference framework for India’s evolving regulatory approach.

European Union

The most detailed supranational definition appears in the European Union’s Artificial Intelligence Act (AI Act). Article 3(60) defines deepfakes as AI-generated or manipulated image, audio or video content that resembles existing persons, objects, places, entities or events and would falsely appear to a person to be authentic or truthful.[54] The Act mandates that such synthetic content be clearly disclosed as artificially generated or manipulated, unless the content is used for legitimate purposes such as law enforcement, national security, or journalistic activities subject to appropriate safeguards.[55]

European regulators treat deepfakes as a threat to electoral integrity, consumer protection, and media authenticity. During elections, the European Digital Media Observatory and national electoral authorities coordinate cross-platform monitoring of political content, allowing early identification of manipulated videos targeting specific candidates or demographic groups. Several member states, including France and Germany, have undertaken additional national measures requiring broadcasters to authenticate political advertisements and flag synthetic content. The EU’s approach is therefore not solely punitive but aims to build an integrated ecosystem that combines platform obligations, research partnerships, content authenticity standards, and public transparency reporting.

United States

The United States does not have a single federal statute governing deepfakes, but regulatory interest has increased significantly in recent years. The proposed NO FAKES Act would create a federal right of action against unauthorised digital replicas of an individual’s voice or likeness, targeting both deepfake impersonation and AI-based commercial exploitation.[56] While the bill remains pending, it reflects federal recognition that existing publicity and privacy laws may be insufficient to address AI-driven impersonation.

Several states have enacted their own laws, resulting in a mosaic of regulatory approaches. California’s AB 602 prohibits the non-consensual creation or distribution of AI-generated sexual imagery and allows victims to bring civil claims for damages.[57] Tennessee’s ELVIS Act strengthens protections for performers by explicitly outlawing unauthorised AI-based voice cloning.[58] Texas and Virginia have introduced election-specific deepfake laws that criminalise the circulation of manipulated political videos close to election periods if intended to mislead voters.

China

China regulates deepfake technology through the Cyberspace Administration of China’s 2022 Provisions on Deep Synthesis Internet Information Services, which adopt an expansive definition of “deep synthesis.” The Provisions define it as any technology that employs deep learning, virtual reality or any other generative or synthetic algorithm to produce text, images, audio, video, virtual scenes or other network information.[59] They require all providers of deep synthesis tools to implement real-name verification for users, incorporate mandatory watermarking into AI-generated content, and undergo algorithmic security assessments before deployment. Platforms must monitor and label synthetic media at scale and are held directly responsible for the circulation of harmful or misleading manipulated content, even when produced by third-party users.[60]

China also imposes strict obligations on content distribution. Intermediaries must block or remove deepfake content that threatens social order, distorts political narratives, or impersonates government officials.[61] China’s regulatory system therefore integrates identity verification, algorithm governance, platform liability, and state oversight in a unified framework.

United Kingdom

The United Kingdom has incorporated deepfake harms into its broader online safety regime through the Online Safety Act 2023. The Act introduces a specific criminal offence for distributing non-consensual deepfake intimate images, making the UK one of the first common-law jurisdictions to adopt a targeted deepfake offence.[62] Beyond criminalisation, the Act requires major platforms to take proactive steps to identify and remove harmful manipulated content, particularly sexualised material and content targeting children.

South Korea

South Korea adopts a criminal law–centred model that treats deepfake sexual content as a serious offence.[63] Amendments to the Act on Special Cases Concerning the Punishment of Sexual Crimes criminalise not only the creation and distribution of deepfake sexual images but also their possession and viewing.[64] This demand-side criminalisation aims to reduce the market for deepfake sexual imagery and deter online perpetrators more comprehensively than supply-side regulation alone.[65]

South Korea’s regulators collect detailed national statistics on image-based sexual abuse and include deepfake-related offences in official annual datasets. This data-driven approach has helped authorities identify trends, understand demographic impacts, and allocate resources to victim support and digital forensics. The country operates specialised cyber sexual violence response centres that assist victims in reporting deepfakes, securing rapid takedowns, and obtaining psychological and legal support.[66]

Denmark

In 2025, Denmark became one of the first EU countries to tackle deepfakes by expressly amending its copyright legislation, proposing to grant individuals a copyright in their image and likeness; the Netherlands has followed. The amendments grant natural persons an exclusive right to control the making available of deepfakes of their persona, within the framework of neighbouring/related rights.

The effect of these amendments is to bring personality/image rights explicitly within copyright law in order to regulate deepfakes, giving every individual an exclusive right to create deepfakes of themselves. In other words, every person has a copyright in their digital likeness/identity. This approach, however, suffers from various legal pitfalls. https://spicyip.com/2025/11/mask-off-copyright-deepfakes-and-the-commodification-of-the-self.html

Appearance in official databases and Research Engagement

Indian governmental databases, national reporting portals, and civil-society research centres engage with deepfakes largely through indirect or umbrella categories, reflecting the fact that Indian criminal law does not recognise deepfakes as a standalone statutory offence. As a result, incidents involving synthetic or manipulated media appear in official systems only through broader cybercrime, impersonation, or obscenity categories rather than through a dedicated classification.[67][68][69]

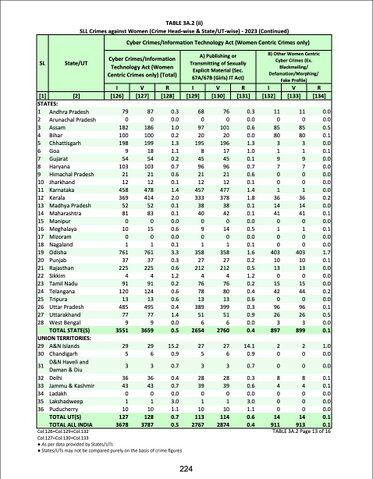

National Crime Records Bureau (NCRB)

The NCRB’s Crime in India report does not provide a discrete statistical category for deepfakes. Instead, deepfake-related incidents are recorded under headings such as forgery, identity theft, cheating by personation, harassment, or the publication or transmission of obscene electronic material.[70] Because of this aggregation, deepfake offences are statistically indistinguishable from other forms of cybercrime, making it difficult to measure their prevalence or identify patterns in victimisation, geographic dispersion, or prosecutorial outcomes. This absence of granularity contributes to policy blind spots, as lawmakers and enforcement agencies cannot determine whether current legal provisions under the IT Act or the BNS adequately capture the nature of synthetic media harms.[71] Moreover, police reports and FIRs often classify deepfake content as “morphing,” a term historically associated with manual photo editing rather than AI-generated manipulation, demonstrating the conceptual and terminological gap between law enforcement vocabulary and contemporary technological realities.[72]

https://www.dsci.in/resource/content/india-cyber-threat-report-2025

Esya Centre

The Esya Centre article argues that India’s current response to AI-generated synthetic content is largely reactive, relying on existing legal provisions and takedown mechanisms after harmful content has already circulated. It highlights the government’s response to a recent deepfake incident and notes that while existing laws on impersonation and intermediary liability apply, this approach depends heavily on individuals reporting violations. The piece contrasts this with the United States’ more proactive strategy under its AI Executive Order, which emphasises developing standards and tools for detecting, labeling, and authenticating synthetic content. The article suggests that India should adopt a similarly forward-looking framework by investing in technical detection capabilities, improving coordination across government agencies, and working with industry to build systems that enable early identification and clearer labeling of AI-generated content.[73]

Centre for Internet and Society (CIS)

The Centre for Internet and Society emphasises that deepfake abuse in India is deeply gendered, with non-consensual sexual deepfakes constituting a form of image-based sexual abuse. CIS studies show that women journalists, students, activists, and political actors are disproportionately targeted, often as a means of intimidation, silencing, or reputational harm.[74] The harms associated with such synthetic content are described as immediate and frequently irreversible, given the speed at which deepfake sexual imagery spreads across platforms and the persistence of content even after removal. CIS argues that reliance on notice-and-takedown procedures is inadequate, as content dissemination often outpaces platform response times. It recommends proactive detection systems, survivor-centric legal and psychological support, specialised police training, and stronger institutional coordination to ensure timely and effective remedies for victims.[75]

https://sensity.ai/reports/

https://5865987.fs1.hubspotusercontent-na1.net/hubfs/5865987/SODF%202024.pdf

https://www.entrust.com/resources/reports/identity-fraud-executive-summary

https://www.entrust.com/sites/default/files/documentation/reports/2025-identity-fraud-report.pdf

Reports by Indian digital rights commentators note that the widespread, low-cost availability of mobile applications and cloud-based interfaces has made deepfake creation accessible to non-experts, allowing realistic forgeries to proliferate on social media and encrypted messaging services with minimal technical skill.[76]

2023 State of Deepfakes: Realities, Threats, and Impact review https://www.securityhero.io/state-of-deepfakes/?form=MG0AV3

In the Pursuance of a Robust Legal Framework to Address Deepfake Harms: An Analysis of the Indian Legal Discourse (IJLT)

https://repository.nls.ac.in/cgi/viewcontent.cgi?article=1553&context=ijlt

https://tattle.co.in/blog/make-it-real/

Challenges

https://www.cppr.in/articles/deepfakes-doxxing-and-digital-abuse

Related Pages

Personality Rights

Moral Rights

Authorship

Personal Data

Intermediary

Criminal Defamation

Identity Theft

References

- ↑ US Department of Homeland Security, Increasing Threats of Deepfake Identities (2023) https://www.dhs.gov/sites/default/files/publications/increasing_threats_of_deepfake_identities_0.pdf accessed 14 March 2026.

- ↑ Tanmaya Nirmal, 'Deepfakes in India: Legal Landscape, Judicial Responses, and a Practical Playbook for Enforcement' (NeGD, 29 September 2025) https://negd.gov.in/blog/deepfakes-in-india-legal-landscape-judicial-responses-and-a-practical-playbook-for-enforcement/

- ↑ Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules, 2026

- ↑ Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules 2021 Amendment 2026 Section 2(wa)

- ↑ Section 2(wa) of Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules 2021 Amendment 2026

- ↑ Ministry of Electronics and Information Technology, Frequently Asked Questions (FAQs) on the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules, 2026 (10 February 2026) https://www.meity.gov.in/static/uploads/2025/10/065b6deb585441b5ccdf8be42502a49c.pdf accessed 14 March 2026

- ↑ Article 3(60) EU Artificial Intelligence Act, 2024 provides ‘deep fake’ means AI-generated or manipulated image, audio or video content that resembles existing persons, objects, places, entities or events and would falsely appear to a person to be authentic or truthful.

- ↑ Shama Mahajan, 'Deepfake Regulation: Same Problem, Different Approaches yet none is an Error-free Resolution!' (SpicyIP, 2025) https://spicyip.com/2025/11/deepfake-regulation-same-problem-different-approaches-yet-none-is-an-error-free-resolution.html accessed 14 March 2026.

- ↑ Ministry of Electronics and Information Technology, 'Explanatory Note [Proposed Amendments to the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 in relation to synthetically generated information]' (22 October 2025) https://www.meity.gov.in/static/uploads/2025/10/8e40cdd134cd92dd783a37556428c370.pdf accessed 14 March 2026

- ↑ Akshat Agrawal, 'Unpacking MeitY’s Proposed IT Rules Amendments: Between Regulation and Practicality' (SpicyIP, 2025) https://spicyip.com/2025/10/unpacking-meitys-proposed-it-rules-amendments-between-regulation-and-practicality.html accessed 14 March 2026

- ↑ Vikram Raj Nanda, 'Regulating Artificially Generated Media: Unpacking the Amendments to the IT Rules' (SpicyIP, 2026) https://spicyip.com/2026/02/regulating-artificially-generated-media-unpacking-the-amendments-to-the-it-rules.html accessed 14 March 2026.

- ↑ Information Technology Act 2000, ss 67, 67A.

- ↑ Bharatiya Nyaya Sanhita 2023, s 356.

- ↑ A George, 'Defamation in the Time of Deepfakes' (2024) 45(1) Columbia Journal of Gender and Law 122 https://doi.org/10.52214/cjgl.v45i1.13186

- ↑ Tanvi Vishnoi, 'Deepfake Regulation & Rights' (SCC Online Blog, 8 November 2025) https://www.scconline.com/blog/post/2025/11/08/deepfake-regulation-rights/ accessed 14 March 2026.

- ↑ Bharatiya Nyaya Sanhita 2023, s 111.

- ↑ Vaishnavi Singh and Abhijeet Raj, 'Dissecting the Conundrum of Deepfake Technology and Artificial Intelligence in Light of the New Penal Laws of India' (CLT Blog, 2026) https://clt.nliu.ac.in/?p=1097 accessed 14 March 2026.

- ↑ Bharatiya Nyaya Sanhita 2023, s 336.

- ↑ Biranchi Naryan P. Panda and Isha Sharma, ‘Deepfake Technology in India and World: Foreboding and Forbidding’ (Asian Institute of Research, 16 July 2025) https://www.asianinstituteofresearch.org/lhqrarchives/deepfake-technology-in-india-and-world:-foreboding-and-forbidding

- ↑ Khushi Saraf and Akshay Sriram, 'The Dilemma of Deepfakes: Expanding the Ambit of Right to Personality to Regulate Deepfakes in India' (Law School Policy Review, 4 May 2024) https://lawschoolpolicyreview.com/2024/05/04/the-dilemma-of-deepfakes-expanding-the-ambit-of-right-to-personality-to-regulate-deepfakes-in-india/ accessed 14 March 2026.

- ↑ Abhay Singh, 'Personality Trademark in India: The New Unchained Wolf and Need to Regulate' (Bar & Bench, 23 January 2025) https://www.barandbench.com/columns/personality-trademark-in-india-the-new-unchained-wolf-and-need-to-regulate accessed 14 March 2026

- ↑ Isha Katiyar, 'Pixelated Perjury: Addressing India’s Regulatory Gaps in Tackling Deepfakes' (TechLaw Forum, 2026) https://techlawforum.nalsar.ac.in/pixelated-perjury-addressing-indias-regulatory-gaps-in-tackling-deepfakes/ accessed 14 March 2026.

- ↑ Naina Jhunjhunwala, 'The Deepfake Conundrum: Can the Digital Personal Data Protection Act, 2023 Deal with Misuse of Generative AI?' (IJLT Blog, 2026) https://forum.nls.ac.in/ijlt-blog-post/the-deepfake-conundrum-can-the-digital-personal-data-protection-act-2023-deal-with-misuse-of-generative-ai/ accessed 14 March 2026.

- ↑ Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules 2021 Amendment 2026 Section 3

- ↑ Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules 2021 Amendment 2026 Section 3

- ↑ Information Technology Act 2000.

- ↑ Bharatiya Nyaya Sanhita 2023.

- ↑ National Crime Records Bureau, Crime in India (Volume 1, 2024) https://ncrb.gov.in/sites/default/files/CII-2023/CII2023Volume1.pdf

- ↑ Ministry of Electronics and Information Technology, ‘Advisory to Intermediaries Regarding Deepfakes’ (7 November 2023) <https://www.meity.gov.in/writereaddata/files/Advisory%20to%20Intermediaries%20regarding%20Deepfakes.pdf> accessed 26 November 2025.

- ↑ Information Technology Act 2000, s 66D.

- ↑ Tanmaya Nirmal, 'Deepfakes in India: Legal Landscape, Judicial Responses, and a Practical Playbook for Enforcement' (NeGD, 29 September 2025) https://negd.gov.in/blog/deepfakes-in-india-legal-landscape-judicial-responses-and-a-practical-playbook-for-enforcement/

- ↑ Information Technology Act 2000, s 66E.

- ↑ Archita Bhargava, ‘Deepfake Technology and Its Legal Regulation in India: A Doctrinal and Comparative Study’ (Vintage Legal, 27 November 2025) https://www.vintagelegalvl.com/post/deepfake-technology-and-it-s-legal-regulation-in-india-a-doctrinal-and-comparative-study

- ↑ Dominika Kuźnicka-Błaszkowska and Nadiya Kostyuk, 'Emerging need to regulate deepfakes in international law: the Russo–Ukrainian war as an example' (2025) 11(1) Journal of Cybersecurity 1, 5-7

- ↑ Ministry of Electronics and Information Technology, ‘Advisory to Intermediaries Regarding Deepfakes’ (7 November 2023) <https://www.pib.gov.in/PressReleaseIframePage.aspx?PRID=1975445&reg=3&lang=2>; Soumyarendra Barik, 'Centre issues advisory to social media platforms over deepfakes after viral ‘Rashmika Mandanna’ video' Indian Express (New Delhi, 8 November 2023) https://indianexpress.com/article/business/centre-deepfake-advisory-to-social-media-platforms-9017283/

- ↑ Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules 2021, r 3(1)(b)(vii)

- ↑ Ministry of Electronics and Information Technology, ‘Advisory to Intermediaries Regarding Deepfakes’ (7 November 2023) https://www.pib.gov.in/PressReleaseIframePage.aspx?PRID=1975445&reg=3&lang=2

- ↑ Ministry of Electronics and Information Technology, ‘Advisory No. 2(4)/2023-CyberLaws-2’ (26 December 2023) https://www.meity.gov.in/writereaddata/files/Advisory%2026_12_2023.pdf

- ↑ Ministry of Electronics and Information Technology, ‘Advisory No. 2(4)/2023-CyberLaws-2’ (26 December 2023) https://www.meity.gov.in/static/uploads/2024/02/c9f89809b63d22656be38a166ef14949.pdf

- ↑ Press Information Bureau, ‘Government Warns Against Deepfakes; MeitY Issues Advisory to Social Media Platforms’ (7 November 2023) https://www.pib.gov.in/PressReleasePage.aspx?PRID=2154268

- ↑ Tanmaya Nirmal, 'Deepfakes in India: Legal Landscape, Judicial Responses, and a Practical Playbook for Enforcement' (NeGD, 29 September 2025) https://negd.gov.in/blog/deepfakes-in-india-legal-landscape-judicial-responses-and-a-practical-playbook-for-enforcement/

- ↑ Standing Committee on Communications and Information Technology, ‘Review of Mechanism to Curb Fake News’ (17th Lok Sabha, 53rd Report, 2024) <https://loksabhadocs.nic.in/lsscommittee/Communications%20and%20Information%20Technology/17_Communications_and_Information_Technology_53.pdf> accessed 26 November 2025.

- ↑ Ministry of Information and Broadcasting, Review of Mechanism to Curb Fake News (Twenty Second Report, 2025)

- ↑ Ministry of Information and Broadcasting, Review of Mechanism to Curb Fake News (Twenty Second Report, 2025)

- ↑ Ministry of Information and Broadcasting, Review of Mechanism to Curb Fake News (Twenty Second Report, 2025)

- ↑ Ministry of Information and Broadcasting, Review of Mechanism to Curb Fake News (Twenty Second Report, 2025)

- ↑ Anil Kapoor v Simply Life India & Ors CS(COMM) 652/2023

- ↑ Amitabh Bachchan v Rajat Nagi & Ors CS(COMM) 819/2022

- ↑ K.S. Puttaswamy v Union of India (2017) 10 SCC 1.

- ↑ Anil Kapoor v Simply Life India & Ors CS(COMM) 652/2023

- ↑ Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act) OJ L 1689

- ↑ Cyberspace Administration of China, ‘Provisions on the Administration of Deep Synthesis Internet Information Services’ (2022) <http://www.cac.gov.cn/2022-12/11/c_1672221949318230.htm> accessed 26 November 2025.

- ↑ NO FAKES Act of 2024, S. 4875, 118th Cong (2024).

- ↑ Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act) OJ L 1689, Art 3(60)

- ↑ Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act) OJ L 1689, Art 3(60)

- ↑ NO FAKES Act of 2025

- ↑ California Assembly Bill No 602 (2019)

- ↑ Tennessee Code Annotated, Title 39, Chapter 14, Part 1 and Title 47 (2024).

- ↑ Provisions on the Administration of Deep Synthesis of Internet Information Services 2023, Article 23

- ↑ Provisions on the Administration of Deep Synthesis of Internet Information Services 2023, Articles 6, 7, 9, 11, 12, 14, 16

- ↑ Provisions on the Administration of Deep Synthesis of Internet Information Services 2023, Article 6

- ↑ Online Safety Act 2023 (UK), s 188.

- ↑ Hyunsu Yim, 'South Korea to criminalise watching or possessing sexually explicit deepfakes' (Reuters, 26 September 2024) https://www.reuters.com/world/asia-pacific/south-korea-criminalise-watching-or-possessing-sexually-explicit-deepfakes-2024-09-26/

- ↑ Act on Special Cases Concerning the Punishment of Sexual Crimes (South Korea), amended 2024.

- ↑ Act on Special Cases Concerning the Punishment of Sexual Crimes (South Korea), amended 2024.

- ↑ Act on Special Cases Concerning the Punishment of Sexual Crimes (South Korea), amended 2024.

- ↑ National Crime Records Bureau, Crime in India (Volume 1, 2024) https://ncrb.gov.in/sites/default/files/CII-2023/CII2023Volume1.pdf

- ↑ National Cyber Crime Reporting Portal, ‘User Manual’ https://cytrain.ncrb.gov.in/staticpage/pdf/ReportandTrack.pdf

- ↑ Mohit Chawdhry, ‘A Proactive Approach to Dealing with Synthetic AI-Generated Content’ (Esya Centre, 14 November 2023) https://www.esyacentre.org/perspectives/2023/11/14/a-proactive-approach-to-dealing-with-synthetic-ai-generated-content

- ↑ National Crime Records Bureau, Crime in India (Volume 1, 2024) https://www.ncrb.gov.in/uploads/files/1CrimeinIndia2023PartI.pdf

- ↑ Information Technology Act 2000; Bharatiya Nyaya Sanhita 2023.

- ↑ National Crime Records Bureau, Crime in India (Volume 1, 2024) p 224 https://www.ncrb.gov.in/uploads/files/1CrimeinIndia2023PartI.pdf

- ↑ Mohit Chawdhry, ‘A Proactive Approach to Dealing with Synthetic AI-Generated Content’ (Esya Centre, 14 November 2023) https://www.esyacentre.org/perspectives/2023/11/14/a-proactive-approach-to-dealing-with-synthetic-ai-generated-content

- ↑ Amrita Sengupta and Yesha Tshering Paul, ‘Consultation on Gendered Information Disorder in India’ (Centre for Internet and Society, 6 May 2024) https://cis-india.org/internet-governance/blog/consultation-on-gendered-information-disorder-in-india

- ↑ Amrita Sengupta and Yesha Tshering Paul, ‘Consultation on Gendered Information Disorder in India’ (Centre for Internet and Society, 6 May 2024) https://cis-india.org/internet-governance/blog/consultation-on-gendered-information-disorder-in-india

- ↑ Archita Bhargava, ‘Deepfake Technology and Its Legal Regulation in India: A Doctrinal and Comparative Study’ (Vintage Legal, 27 November 2025) https://www.vintagelegalvl.com/post/deepfake-technology-and-it-s-legal-regulation-in-india-a-doctrinal-and-comparative-study